Automation has reshaped both the way we work and the way we tackle problems. Thanks to the progress made in robotics and artificial intelligence (AI) over the last few years, it is now possible to leave several tasks in the hands of machines and algorithms.

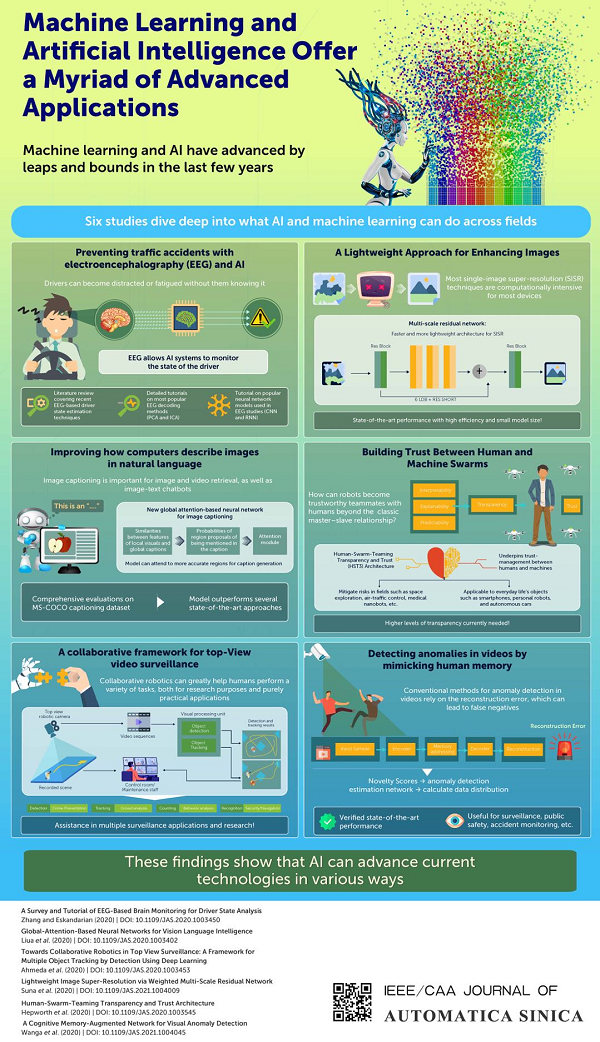

To highlight these advances, the July 2021 issue of IEEE/CAA Journal of Automatica Sinica features six articles covering innovative applications of AI that can make our lives easier.

Paper Information

C. Zhang and A. Eskandarian, “A survey and tutorial of EEG-based brain monitoring for driver state analysis,” IEEE/CAA J. Autom. Sinica, vol. 8, no. 7, pp. 1222–1242, Jul. 2021.

http://www.ieee-jas.net/en/article/doi/10.1109/JAS.2020.1003450

P. Liu, Y. J. Zhou, D. Z. Peng, and D. P. Wu, “Global-attention-based neural networks for vision language intelligence,” IEEE/CAA J. Autom. Sinica, vol. 8, no. 7, pp. 1243–1252, Jul. 2021.

http://www.ieee-jas.net/article/doi/10.1109/JAS.2020.1003402?viewType=HTML&pageType=en

I. Ahmed, S. Din, G. Jeon, F. Piccialli, and G. Fortino, “Towards collaborative robotics in top view surveillance: A framework for multiple object tracking by detection using deep learning,” IEEE/CAA J. Autom. Sinica, vol. 8, no. 7, pp. 1253–1270, Jul. 2021.

http://www.ieee-jas.net/article/doi/10.1109/JAS.2020.1003453?viewType=HTML&pageType=en

L. Sun, Z. B. Liu, X. Y. Sun, L. C. Liu, R. S. Lan, and X. N. Luo, “Lightweight image super-resolution via weighted multi-scale residual network,” IEEE/CAA J. Autom. Sinica, vol. 8, no. 7, pp. 1271–1280, Jul. 2021.

http://www.ieee-jas.net/article/doi/10.1109/JAS.2021.1004009?pageType=en

A. J. Hepworth, D. P. Baxter, A. Hussein, K. J. Yaxley, E. Debie, and H. A. Abbass, “Human-swarm-teaming transparency and trust architecture,” IEEE/CAA J. Autom. Sinica, vol. 8, no. 7, pp. 1281–1295, Jul. 2021.

http://www.ieee-jas.net/article/doi/10.1109/JAS.2020.1003545?viewType=HTML&pageType=en

T. Wang, X. Xu, F. Shen, and Y. Yang, “A cognitive memory-augmented network for visual anomaly detection,” IEEE/CAA J. Autom. Sinica, vol. 8, no. 7, pp. 1296–1307, Jul. 2021.

http://www.ieee-jas.net/article/doi/10.1109/JAS.2021.1004045?viewType=HTML&pageType=en

The first article, authored by researchers from the ASIM Lab of the Virginia Tech Mechanical Engineering Department, USA, delves into an interesting mixture of topics: intelligent cars, machine learning, and electroencephalography (EEG). Self-driving cars have been in the spotlight for a while. So how does EEG fit into this picture?

Sometimes drivers become distracted or fatigued without realizing it, increasing the risk of a traffic accident. Fortunately, cars can now be equipped with AI systems that sense and analyze the driver’s EEG signals to constantly monitor their state and issue warnings when necessary. This article reviews the latest EEG-based driver state estimation techniques. It also provides detailed tutorials on the most popular EEG decoding methods and neural network models, helping researchers become familiar with the field. The authors explain, “By implementing these EEG-based methods, drivers’ state can be estimated more accurately, improving road safety.”

Next, scientists from Sichuan University, China, and the University of Florida, USA, propose a new approach for image captioning, a task that is difficult for computers. The problem is that even though computers can now aptly recognize objects in a given image, it is tricky to describe the scene based solely on these objects. To tackle this, the researchers developed a global-attention-based network that accurately estimates the probability that a given region of the image will be mentioned in the caption. This was achieved by analyzing the similarities between local visual features and global caption features. Using an attention module, the model can more accurately attend to the most important regions in the image to produce a good caption. Automatic image captioning is a great tool for indexing large image datasets and helping the visually impaired.
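The idea of scoring image regions against a global caption feature can be illustrated with a minimal sketch. This is a generic scaled dot-product attention written under assumed conventions, not the authors' network: the function name `region_attention`, the toy feature vectors, and the choice of dot-product similarity are all hypothetical.

```python
import math

def region_attention(local_feats, global_feat):
    """Score each region feature against one global feature vector.

    Hypothetical helper for illustration only; the paper's actual
    similarity measure and architecture may differ.
    """
    dim = len(global_feat)
    # Scaled dot-product similarity between each region and the global feature.
    scores = [
        sum(a * b for a, b in zip(region, global_feat)) / math.sqrt(dim)
        for region in local_feats
    ]
    # Numerically stable softmax turns similarities into probabilities.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Attention-weighted summary of the region features.
    context = [
        sum(w * region[k] for w, region in zip(weights, local_feats))
        for k in range(dim)
    ]
    return weights, context

# Toy data: three image regions with two-dimensional features (assumed values).
local_feats = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
global_feat = [0.0, 3.0]  # stands in for a global caption feature
weights, context = region_attention(local_feats, global_feat)
print(weights)
```

In this toy setup the second region is the most similar to the global feature, so it receives the largest weight and dominates the summary vector that a caption decoder would consume.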

In the third article, scientists from the Institute of Management Sciences, Pakistan; Yeungnam University, South Korea; Xidian University, China; and the University of Naples Federico II and the University of Calabria, Italy, attempt to bring collaborative robotics to the field of top-view surveillance. More specifically, they propose a detailed framework in which deep learning is used in top-view computer vision, in contrast to most studies, which focus on frontal-view images. This framework uses a smart robot camera with an embedded visual processing unit that runs deep-learning algorithms for the detection and tracking of multiple objects (essential tasks in various applications, including crime prevention and crowd and behavior analysis).

In the fourth article, researchers from Guilin University of Electronic Technology and Hunan University, China, propose a new approach for producing super-resolution images based on features that a neural network can extract and use. Their method, called weighted multi-scale residual network, can leverage both global and local image features from different scales to reconstruct high-quality images with state-of-the-art performance. The authors say, “Current imaging devices certainly cannot provide enough computing resources, and thus, we designed a fast and lightweight architecture to mitigate this problem.”

The fifth article, by researchers from the University of New South Wales, Australia, covers the complex topic of transparency and trust in human–swarm teaming. According to the authors, explainability, interpretability, and predictability are distinct yet overlapping concepts in artificial intelligence that are subordinate to transparency. Drawing from the literature, they propose an architecture to ensure trustworthy collaboration between humans and machine swarms, going beyond the usual master–slave paradigm. The researchers conclude, “Human-swarm teams will require increased levels of transparency before we can begin to leverage the opportunity that these systems present.”

Lastly, scientists from the University of Electronic Science and Technology of China showcase yet another use of deep neural networks in the field of computer vision, more specifically in video anomaly detection. Existing models for automatically detecting anomalies in video footage try to predict or reconstruct a frame based on previous input and, by calculating the reconstruction error, determine whether anything seems out of place. The problem with this approach is that abnormal frames are sometimes reconstructed well, leading to false negatives. The scientists tackled this problem by developing a cognitive memory-augmented network that imitates the way in which humans remember normal samples and uses both reconstruction error and calculated novelty scores to detect anomalies in videos. With verified state-of-the-art performance, the network can be readily applied in surveillance tasks, such as accident and public safety monitoring.
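A minimal sketch can show why combining reconstruction error with a novelty score helps. It is not the authors' network: the memory of "normal" prototypes, the nearest-prototype distance used as the novelty score, the weighting factor `alpha`, and all numeric values are assumptions made for illustration.

```python
import math

def mse(frame, reconstruction):
    """Mean squared reconstruction error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(frame, reconstruction)) / len(frame)

def novelty(feature, memory):
    """Distance from a feature to its nearest remembered normal prototype."""
    return min(math.dist(feature, item) for item in memory)

def anomaly_score(frame, reconstruction, feature, memory, alpha=0.5):
    """Blend both signals; alpha is a hypothetical weighting factor."""
    return alpha * mse(frame, reconstruction) + (1 - alpha) * novelty(feature, memory)

# Assumed memory of prototypes learned from normal footage.
memory = [[0.0, 0.0], [1.0, 1.0]]

# A normal frame: reconstructed perfectly and close to a stored prototype.
normal_score = anomaly_score([0.2, 0.4], [0.2, 0.4], [0.1, 0.0], memory)

# An abnormal frame that is also reconstructed perfectly, so reconstruction
# error alone would miss it, but its feature lies far from every prototype.
abnormal_score = anomaly_score([0.9, 0.1], [0.9, 0.1], [5.0, 5.0], memory)

print(normal_score, abnormal_score)
```

Both toy frames have zero reconstruction error, yet the second receives a much higher score because of its novelty term, which is the false-negative case described above.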

We are all very likely to witness artificial intelligence becoming pivotal in many real-life applications soon. So, make sure to keep up with the times by checking out the July 2021 issue of IEEE/CAA Journal of Automatica Sinica!
