Volume 11

Issue 7

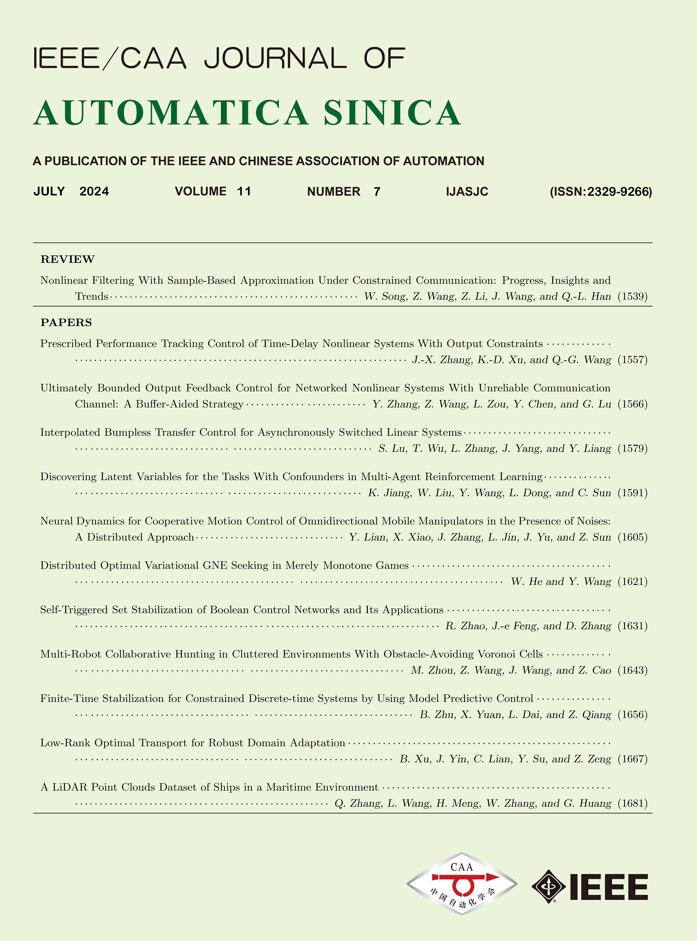

IEEE/CAA Journal of Automatica Sinica

Citation: Y. Lin, G. Hu, L. Wang, Q. Li, and J. Zhu, “A multi-AGV routing planning method based on deep reinforcement learning and recurrent neural network,” IEEE/CAA J. Autom. Sinica, vol. 11, no. 7, pp. 1720–1722, Jul. 2024. doi: 10.1109/JAS.2023.123300

[1] E. H. Grosse, C. H. Glock, and W. P. Neumann, “Human factors in order picking: A content analysis of the literature,” Int. J. Production Research, vol. 55, no. 5, pp. 1260–1276, May 2017. doi: 10.1080/00207543.2016.1186296

[2] Y. Lin, Y. Xu, J. Zhu, et al., “MLATSO: A method for task scheduling optimization in multi-load AGVs-based systems,” Robotics and Computer-Integrated Manufacturing, vol. 79, p. 102397, Feb. 2023.

[3] H. Yoshitake, R. Kamoshida, and Y. Nagashima, “New automated guided vehicle system using real-time holonic scheduling for warehouse picking,” IEEE Robotics and Auto. Letters, vol. 4, no. 2, pp. 1045–1052, Apr. 2019. doi: 10.1109/LRA.2019.2894001

[4] M. Cardona, A. Palma, and J. Manzanares, “COVID-19 pandemic impact on mobile robotics market,” in Proc. IEEE ANDESCON, Dec. 2020, pp. 1–4.

[5] K. Guo, J. Zhu, and L. Shen, “An improved acceleration method based on multi-agent system for AGVs conflict-free path planning in automated terminals,” IEEE Access, vol. 9, pp. 3326–3338, Dec. 2021. doi: 10.1109/ACCESS.2020.3047916

[6] Y. Lian, W. Xie, and L. Zhang, “A probabilistic time-constrained based heuristic path planning algorithm in warehouse multi-AGV systems,” IFAC-PapersOnLine, vol. 53, no. 2, pp. 2538–2543, Jan. 2020. doi: 10.1016/j.ifacol.2020.12.293

[7] Y. Yang, J. Zhang, Y. Liu, and X. Song, “Multi-AGV collision avoidance path optimization for unmanned warehouse based on improved ant colony algorithm,” Communications in Computer and Information Science, vol. 1159, pp. 527–537, Apr. 2020.

[8] T. Lu, Z. Sun, S. Qiu, and X. Ming, “Time window based genetic algorithm for multi-AGVs conflict-free path planning in automated container terminals,” in Proc. IEEE Int. Conf. Industrial Engineering and Engineering Management, Jan. 2021, pp. 603–607.

[9] Y. Zheng, L. Wang, P. Xi, et al., “Multi-agent path planning algorithm based on ant colony algorithm and game theory,” J. Computer Applications, vol. 39, no. 3, pp. 681–687, May 2019.

[10] L. Z. Du, S. Ke, Z. Wang, et al., “Research on multi-load AGV path planning of weaving workshop based on time priority,” Math. Biosci. Eng., vol. 16, no. 4, pp. 2277–2292, Mar. 2019. doi: 10.3934/mbe.2019113

[11] S. Li, J. Zhang, and B. Zheng, “Research on obstacle avoidance strategy of grid workspace based on deep reinforcement learning,” in Proc. 2nd Asia-Pacific Conf. Communications Technology and Computer Science, Feb. 2022, vol. 2022, pp. 11–15.

[12] Y. Yang, J. Li, and L. Peng, “Multi-robot path planning based on a deep reinforcement learning DQN algorithm,” CAAI Trans. Intelligence Technology, vol. 5, no. 3, pp. 177–183, Jun. 2020. doi: 10.1049/trit.2020.0024

[13] G. Shen, R. Ma, Z. Tang, et al., “A deep reinforcement learning algorithm for warehousing multi-AGV path planning,” in Proc. Int. Conf. Networking, Communications and Information Technology, Dec. 2021, pp. 421–429.

[14] P. A. Corrales and F. A. Gregori, “Swarm AGV optimization using deep reinforcement learning,” in Proc. 3rd Int. Conf. Machine Learning and Machine Intelligence, Dec. 2020, pp. 65–69.

[15] L. Jiang, H. Huang, and Z. Ding, “Path planning for intelligent robots based on deep Q-learning with experience replay and heuristic knowledge,” IEEE/CAA J. Autom. Sinica, vol. 7, no. 4, pp. 1179–1189, Jul. 2020. doi: 10.1109/JAS.2019.1911732

[16] N. Thumiger and M. Deghat, “A multi-agent deep reinforcement learning approach for practical decentralized UAV collision avoidance,” IEEE Control Systems Letters, vol. 6, pp. 2174–2179, Dec. 2022. doi: 10.1109/LCSYS.2021.3138941

[17] C. Piao and C. Liu, “Energy-efficient mobile crowdsensing by unmanned vehicles: A sequential deep reinforcement learning approach,” IEEE Internet Things J., vol. 7, no. 7, pp. 6312–6324, Jul. 2020. doi: 10.1109/JIOT.2019.2962545