2. Beijing Aerospace Times Optical-electronic Technology Co., Ltd(ATOT), Beijing 100094, China

WITH the ability to take off and land vertically,along with hovering ability,the small unmanned aerial rotorcraft (SUAR) has an irreplaceable role in civil applications[1]. Thus,it has been widely used in many regions,such as road traffic monitoring,data acquisition,mapping and surveillance,etc[2, 3, 4]. And it allows us to easily access environments to which no humans or other vehicles can get access.

Now most navigation systems for SUAR are based on inertial sensors (gyroscopes and accelerometer) and GPS[5, 6]. However,buildings and other obstacles in urban environments can easily disturb the GPS signal and even cause the signal to get lost due to electromagnetic noise from active emitters and multi-path effects. And it directly leads to the poor performance of SUAR as a result of the inertial navigation errors generated by the gyroscope drifts and accelerometer biases without GPS. So there is a requirement that the vehicle is able to navigate without using GPS to guarantee the safety of operations in unknown,unstructured and GPS-denied environments.

Considering the problem mentioned above,a feasible solution is to navigate the vehicle using a system based on vision. Small cameras which are low-lost,light-weight and passive are attractive sensors for SUAR. Furthermore,a vision system is self-sufficient,and provides position values which are much more accurate than the standard GPS$'$s (cm accuracy). Off-board cameras for motion capture systems are well studied[7, 8]. However,the cameras can be used only in small environments that are accessible physically by humans,and the cameras$'$s field of view directly constrains the operation of the helicopter. Therefore,it is necessary to install the camera onboard. Saripalli used vision for the precise target detection and recognition and the navigation successfully in combination with GPS[9]. Moreover,Hermansson established an EKF model to fuse the measurements of vision,GPS and compass,and realized a landing within 0.5m in the horizontal direction[10]. Obviously,these systems still relies on the GPS signal. The stereo vision is also used to detect the safe landing area and to achieve soft landing[11, 12]. However,the range data become inaccurate when the distance to the scene is much larger than the baseline[13].

In this paper,we propose an autonomous landing method for SUAR to tackle the problem of poor navigation in GPS-denied environment by using a single camera and onboard inertial sensors. The position and attitude of the SUAR are estimated by the vision system using a specially designed landmark firstly. Then a wavelet-adaptive KF is developed by fusing the position estimated by the vision system with inertial data to improve the performance of SUAR.

The paper is organized as follows: The image processing algorithm is described in Section II. In Section III,an adaptive-KF based on wavelet filter is proposed to get high-precision estimated values. Simulation and test results confirm the effectiveness of the proposed method in Section IV. Finally,conclusions are drawn in Section V.

II. THE DESCRIPTION OF VISION SYSTEMTo land successfully,two basic stages are required by the visual navigation system for SUAR: the landmark detection and the accurate estimation of position and attitude for SUAR.

A. Landmark Detection Based on SVMAs a basic step of a visual navigation system,usually there are three methods for landmark detection. Special landmarks are identified easily,but this approach cannot be applied commonly. Besides,pattern matching[14] is a mostly used way,too. However,the main shortcomings are the large calculation and vast experiments are needed when dividing the similarity threshold. Machine learning[15, 16] is recognized as the most intelligent method,and can be used commonly to detect any landmark.

In order to significantly improve the effect of landmark detection,an innovative landmark detection technique was studied and finally specified. Its support vector machine (SVM) takes advantage of the Hu invariant moments[17].

A series of Hu invariant moments of several images of landmark are used as input to the SVM,which now is widely used for detection purposes and is based on a training set of elements. Basically,the SVM technique aims to geometrically separate the training set represented in a $n$ space,with $n$ standing for the number of criteria taken into account for classification,using a hyper-plane or some more complex surface if necessary. The SVM training algorithm finds out the best frontier in order to maximize the margin,defined as a symmetric zone centered on the frontier with no training points included,and to minimize the number of wrong classification occurrences,as which can be shown in Fig. 1.

|

Download:

|

| Fig. 1. SVM detection in the two-dimensional space. | |

The output of SVM training stage is thus the hyper-plane equation[18]

| $\begin{align} \left\langle {\vec w,\vec x} \right\rangle + b = 0, \end{align}$ | (1) |

where $\vec x$ is the $n$ components vector representing the image to be classified. It should be noted that the normal vector $\vec w$ is a linear combination of a reduced set of training vectors $\vec x$,located nearby the two parallel hyper-planes defining the margin: points located far from the margin have no contribution in the hyper-plane definition.

Then the classification algorithm is straight forward: if $\vec y_i \left( {\left\langle {\vec w,\vec x_i } \right\rangle + b} \right) - 1 \ge 0$,then the corresponding mark is classified as landmark-free.

When the linear separation is not efficient,it often proves to become linear when applying some non-linear transform on the coordinate,and SVM technique would be applied to achieve efficiently this non-linear transform using kernels.

In addition,the SVM convex programming problem can be converted to a dual problem using Lagrange method. That is,a conditional extremum can be constructed as $\min \left\| w \right\|^2 /2$,$ {\rm s.t.}\quad \vec y_i \left( {\left\langle {\vec w,\vec x_i } \right\rangle + b} \right) - 1 \ge 0$. Here,we use the Lagrange method to convert it to $L\left( {w,b,\alpha _i } \right) = \left\| w \right\|^2 /2 - \sum\nolimits_{i = 1}^l {\alpha _i y_i \left( {\left\langle {\vec w,\vec x_i } \right\rangle + b} \right) + \sum\nolimits_{i = 1}^l {\alpha _i } } ,\alpha _i \ge 0$,which can be defined as $\mathop {\min }\nolimits_{w,b} \mathop {\max }\nolimits_{\alpha _i \ge 0} L\left( {w,b,\alpha _i } \right)$. Then,the expression above can be transformed through dualistic transformation as $\mathop {\max }\nolimits_{\alpha _i \ge 0} \mathop {\min }\nolimits_{w,b} L\left( {w,b,\alpha _i } \right)$. Then the problem can be resolved using a series of numerical method[19].

As the input to SVM,the first,second and third moments of inertia are testified to be sufficient to distinguish between the landing target and other objects presented in the image. The moments of inertia can be shown as

| $\begin{align} \left\{ \begin{array}{l} \phi _1 = \eta _{20} + \eta _{02},\\ \phi _2 = \left( {\eta _{20} - \eta _{02} } \right) + 4\eta _{11}^2,\\ \phi _3 = \left( {\eta _{30} - 3\eta _{12} } \right)^2 + \left( {3\eta _{21} - \eta _{03} } \right)^2,\\ \end{array} \right. \end{align}$ | (2) |

where $\phi _1 ,\phi _2 ,\phi _3$ are the three lower order invariant moments,and $\eta _{pq}$ is the normalized central moment which can be defined as

| $\begin{align} \eta _{pq} = \frac{{\mu _{pq} }}{{\eta _{00}^\gamma }}, \end{align}$ | (3) |

where $\gamma = \frac{{p + q}}{2} + 1$ ,$p + q = 2,3,\cdots$ and $ \mu _{pq}$ represents the central moment of an object,which is given by

| $\begin{align} \mu _{pq} = \int_{ - \infty }^\infty {\int_{ - \infty }^\infty {\left( {x - \bar x} \right)^p \left( {y - \bar y} \right)^q f\left( {x,y} \right){\rm d}x{\rm d}y} }, \end{align}$ | (4) |

where $\left( {\bar x,\bar y} \right)$ represents the center of the gravity of the object,$f\left( {x,y} \right)$ represents a two-dimensional object as a continuous function with $ p,q = 0,1,2,\cdots$.

B. State Estimation Based on VisionWe have chosen black triangles on white background as they are fast to detect and provide accurate image features. When the landmark is detected in the current image,corners are extracted by the Kitchen and Rosenfild algorithm. Then from the projection of the corner points of the target landmark,the attitude and position of the SUAR is uniquely determined through the following equations,if all intrinsic camera parameters are known.

| $\begin{align} z_c \left[{\begin{array}{*{20}c} u \\ v \\ 1 \\ \end{array}} \right] = {\pmb{A}}\left[{\begin{array}{*{20}c} {\pmb{R}} & {\pmb{p}} \\ \end{array}} \right]\left[{\begin{array}{*{20}c} {x_{\rm{w}} } \\ {y_{\rm{w}} } \\ {z_{\rm{w}} } \\ 1 \\ \end{array}} \right], \end{align}$ | (5) |

where $A = \left[{\begin{array}{*{20}c} {f_x } & 0 & {u_0 } \\ 0 & {f_y } & {v_0 } \\ 0 & 0 & 1 \\ \end{array}} \right]$ is the intrinsic camera parameters matrix,${\pmb{R}} = \left[{\begin{array}{*{20}c} {\sin \psi \cos \theta } \\ {\cos \psi \cos \theta } \\ {\sin \theta } & \\ \end{array}} \right.\;$ $\left. {\begin{array}{*{20}c} {\cos \psi \cos \varphi + \sin \psi \sin \theta \sin \varphi } & { - \cos \psi \sin \varphi + \sin \psi \sin \theta \cos \varphi } \\ { - \sin \psi \cos \varphi + \cos \psi \sin \theta \sin \varphi } & {\sin \psi \sin \varphi + \cos \psi \sin \theta \cos \varphi } \\ { - \cos \theta \sin \varphi } & { - \cos \theta \cos \varphi } \\ \end{array}} \right]\;$ represents the attitude matrix of the SUAR,and $\varphi$,$\theta$ and $\psi$ are roll,pitch and yaw angle,respectively,${\pmb{p}}$ is the translation vector,$\left( {x_{\rm{w}} ,y_{\rm{w}} ,z_{\rm{w}} } \right)$ and $\left( {x_{\rm{c}} ,y_{\rm{c}} ,z_{\rm{c}} } \right)$ are the coordinates of corner points in the navigation system and camera coordinate system respectively,and $\left( {u,v} \right)$ is the coordinates of corner points in the pixel coordinate system.

Suppose that the landmark is detected,and the normalized coordinates of the corner in the navigation system can be represented as ${\pmb{q}}_i \left( {i = 1,2,\cdots ,12} \right)$ (12 points are used in this research),and the corresponding coordinates in the image plane is ${\pmb{x}}_i = [\begin{array}{*{20}c} u & v & 1 \\ \end{array}]^{\rm T} \left( {i = 1,2,\cdots ,12} \right)$; then the simplified form of (5) can be expressed as follows

| $\begin{align} \lambda _i {\pmb{x}}_i = {\pmb{A}} \cdot \left[ {\begin{array}{*{20}c} {\pmb{R}} & {\pmb{p}} \\ \end{array}} \right]{\pmb{q}}_i, \end{align}$ | (6) |

where $\lambda _i$ is equal to $z_{\rm{c}}$,and thus we can obtain that $\lambda _i = {\pmb{e}}_3^{\rm T} \left[{\begin{array}{*{20}c} {\pmb{R}} & {\pmb{p}} \\ \end{array}} \right]{\pmb{q}}_i$,where ${\pmb{e}}_3^{\rm T} = \left[{\begin{array}{*{20}c} 0 & 0 & 1 \\ \end{array}} \right]$.

If ${\pmb{y}}_i = {\pmb{A}}^{ - 1} {\pmb{x}}_{i}$,then we can define Formula (6) as

| $\begin{align} \left( {{\pmb{y}}_i {\pmb{e}}_3^{\rm T} - {\pmb{I}}} \right)\left[{\begin{array}{*{20}c} {\pmb{R}} & {\pmb{p}} \\ \end{array}} \right]{\pmb{q}}_i = 0. \end{align}$ | (7) |

Considering the landing area is a plane,all the corners in the navigation coordinates satisfy ${\pmb{e}}_{{3}}^{\rm T} {\pmb{q}}_i = 0$. For $\left[{\begin{array}{*{20}c} {\pmb{R}} & {\pmb{p}} \\ \end{array}} \right]{\pmb{ = }}\left[{\begin{array}{*{20}c} {{\pmb{r}}_{1} } & {{\pmb{r}}_{2} } & {{\pmb{r}}_{3} } & {\pmb{p}} \\ \end{array}} \right]$and ${\pmb{q}}_i = \left[{\begin{array}{*{20}c} {q_{i1} } & {q_{i2} } & {q_{i3} } & 1 \\ \end{array}} \right]$,${\pmb{r}}_3$ and $q_{i3}$ can be removed,and (7) can be transformed into

| $\begin{align} {\pmb{F}}\left[\begin{array}{l} {\pmb{r}}_{1} \\ {\pmb{r}}_{2} \\ {\pmb{p}} \\ \end{array} \right] = 0, \end{align}$ | (8) |

where ${\pmb{F = }}\left[{{\pmb{F}}_{1}^{\rm T} \cdots {\pmb{F}}_{{12}}^{\rm T} } \right]^{\rm T}$,and ${\pmb{F}}_i =\\ \left[{\begin{array}{*{20}c} \!\!\!{q_{i1} }\!\!\!&0\!\!\!\!&\!\!\!{ - q_{i1} y_{i1} } & {q_{i2} } & 0&{ - q_{i2} y_{i1} } & 1 & 0 & { - y_{i1} } \\ 0 & {q_{i1} } & { - q_{i1} y_{i2} } & 0 &{q_{i2} } & { - q_{i2} y_{i2} } & 0 & 1 & { - y_{i2} } \\ \end{array}} \right]$.

Then singular value vector $\left[{\begin{array}{*{20}c} {{\tilde{\pmb r}}_1^{\rm T} } & {{\tilde{\pmb r}}_{2}^{\rm T} } & {{\tilde{\pmb p}}^{\rm T} } \\ \end{array}} \right]^{\rm T}$ can be calculated by singular value decomposition(SVD). And then the translation vector ${\pmb{p}}$ can be estimated as

| $\begin{align} {\pmb{p}} = \frac{{2{\tilde{\pmb p}}}}{{\left\| {{\pmb{r}}_{1} } \right\| + \left\| {{\pmb{r}}_{2} } \right\|}}, \end{align}$ | (9) |

and ${\pmb{r}}_3 = {\pmb{r}}_{1} \times {\pmb{r}}_{2}$. Finally,the attitude of the SUAR can be obtained from ${\pmb{R}}$.

Through the linear algorithm above,9 variables (${\pmb{r}}_{1} ,{\pmb{r}}_{2} ,{\pmb{p}}$) composed of attitude and position parameters $\varphi ,\theta ,\psi ,p_1 ,p_2 ,p_3$ can be estimated. It is clear that the 9 variables contain much noise,but they can be used as initial values of the non-linear algorithm below.

The estimation from the non-linear algorithm is optimized by minimizing the reprojection error $G$ as below

| $\begin{align} \left\{ {\begin{array}{*{20}c} {{\pmb{G}} = \left[{{\pmb{G}}_{1}^{\rm T} \cdots {\pmb{G}}_{{12}}^{\rm T} } \right]^{\rm T} \quad \quad \quad } \\ {{\pmb{G}}_i = \left( {{\pmb{y}}_i {\pmb{e}}_3^{\rm T} - {\pmb{I}}} \right)\left[{\begin{array}{*{20}c} {\pmb{R}} & {\pmb{p}} \\ \end{array}} \right]{\pmb{q}}_i } \\ \end{array},~~i = 1,\cdots,12.} \right. \end{align}$ | (10) |

Then the Jacobian matrix can be shown as ${\pmb{D}}_{\rm{J}} = \left[{\begin{array}{*{20}c} {\frac{{\partial {\pmb{G}}_1 }}{{\partial \varphi }}} & {\frac{{\partial {\pmb{G}}_1 }}{{\partial \theta }}} & {\frac{{\partial {\pmb{G}}_1 }}{{\partial \psi }}} & {\frac{{\partial {\pmb{G}}_1 }}{{\partial p_1 }}} & {\frac{{\partial {\pmb{G}}_1 }}{{\partial p_2 }}} & {\frac{{\partial {\pmb{G}}_1 }}{{\partial p_3 }}} \\ \cdots & \cdots & \cdots & \cdots & \cdots & \cdots \\ {\frac{{\partial {\pmb{G}}_{{1}2} }}{{\partial \varphi }}} & {\frac{{\partial {\pmb{G}}_{{1}2} }}{{\partial \theta }}} & {\frac{{\partial {\pmb{G}}_{{1}2} }}{{\partial \psi }}} & {\frac{{\partial {\pmb{G}}_{{1}2} }}{{\partial p_1 }}} & {\frac{{\partial {\pmb{G}}_{{1}2} }}{{\partial p_2 }}} & {\frac{{\partial {\pmb{G}}_{{1}2} }}{{\partial p_3 }}} \\ \end{array}} \right]$.

Therefore,the attitude and position parameters based on Newton method can be expressed as

| $\begin{align} {\pmb{\beta }}_{k + 1} = {\pmb{\beta }}_k - k\left( {{\pmb{D}}_{\rm{J}} } \right)^{ - 1} {\pmb{G}}, \end{align}$ | (11) |

where ${\pmb{\beta }} = \left[{\begin{array}{*{20}c} \varphi & \theta & \psi & {p_1 } & {p_2 } & {p_3 } \\ \end{array}} \right]^{\rm T}$,and $k$ is the step size.

III. SENSOR FUSIONThe attitude and position information from the vision system cannot be fed back to the controller directly because of their lack of robustness.

Therefore,a filter based on Kalman filter (KF) is developed to fuse highly accurate position estimated from vision system with inertial data from the inertial measurement unit (IMU,including angular rate gyroscope and accelerometers). Not only can the filter filter out most of the noise,but it can also provide sufficient information to complete the task when the vision system is disturbed.

A. The State and Observation Model of SUARThe KF is done using the error state space as follows

| $\begin{align} \left\{ {\begin{array}{*{20}ll} {\delta {\dot{\pmb r}} = - {\pmb{\omega }}_{{\rm{en}}} \times \delta {\pmb{r}} + \delta {\pmb{V}},} \\ {\delta {\dot{\pmb V}} = - \left( {{\pmb{\omega }}_{{\rm{ie}}} {+{\pmb \omega }}_{{\rm{in}}} } \right){\times\pmb{ \delta V}} - {\pmb{\Psi }} \times {\pmb{f}} + \delta {\pmb{g}} + \nabla,} \\ {{\dot{\pmb \Psi }} = - {\pmb{\omega }}_{{\rm{in}}} \times {\pmb{\Psi }} + {\pmb{\varepsilon }}.} \\ \end{array}} \right. \end{align}$ | (12) |

We can conclude the discrete state equation ${\pmb{X}}_k = {\pmb{\Phi }}_{k,k - 1} {\pmb{X}}_{k - 1} + {\pmb{W}}_{k - 1}$. In (12),$\delta {\pmb{r}}$,$\delta {\pmb{V}}$ and ${\pmb{\Psi }}$ are the error vectors of the position,velocity and attitude,${\pmb{\omega }}_{{\rm{ie}}}$ is the Earth rotation rate,${\pmb{f}}$ is the accelerometers outputs,$\nabla$ is the bias of accelerometer,${\pmb{\varepsilon }}$ is the zero drift of gyroscope,${\pmb{X}}_k$ is the state vector,${\pmb{\Phi }}_{k,k - 1}$ is the state transition matrix and ${\pmb{W}}_k$ represents the system noise.

The three observations are obtained by the difference between the position from vision system and the position from INS. Then the observational equation can be given as

| $\begin{align} {\pmb{Z}}_k = \left[{{\pmb{P}}_{{\rm{INS}}} - {\pmb{P}}_{{\rm{VIS}}} } \right]^{\rm T} = {\pmb{H}}_k {\pmb{X}}_k + {\pmb{V}}_k, \end{align}$ | (13) |

where ${\pmb{Z}}_k$ is the observation vector and ${\pmb{V}}_k$ is the observation noise.

B. The Wavelet-adaptive KF1) The wavelet filter method

Sensor noises are always treated as zero mean Gaussian white noise in traditional Kalman filter. However,to get high precision position and attitude information,it$'$s necessary to get rid of high frequency noises in the sensors (SINS and vision system). The common ways to deal with the high frequency noises include the data smoothing filter,infinite impulse response (IIR) filter,finite impulse response (FIR) filter and wavelet filter. The data smoothing can eliminate the measurement outlier and noise for the high frequency data,but it has high requirement for the sensor data collection system. Although the IIR can eliminate the high frequency noise,it can cause a phase delay. The FIR can reduce the noise signal energy,but it has limitation in the high frequency noise suppression. Wavelet filter is a time domain and frequency domain method,having good representation for the partial signal characteristic. In the low frequency region,it has a higher frequency resolution and lower time resolution. On the other hand,it has higher time resolution and a lower frequency resolution in the high frequency region. Therefore,wavelet filter is used as a tool to reduce high frequency noises in the sensor information.

We can suppose the data with noise as

| $\begin{align} f\left( t \right) = g\left( t \right) + \varepsilon \left( t \right), \end{align}$ | (14) |

where $f\left( t \right)$ and $g\left( t \right)$ represent the real signal and noise respectively. Besides,they are independent of each other,and $\varepsilon \left( t \right) \in {\rm N}\left( {0,\sigma ^2 } \right)$.

The process of wavelet decomposition and reconstruction scheme includes three steps:

a) Wavelet decomposition

| $\begin{align} \left\{ {\begin{array}{*{20}ll} {Split\left( {s^j } \right) = \left( {e_{j - 1} ,o_{j - 1} } \right),} \\ {e_{j - 1} = \left\{ {e_{j - 1,k} = s_{j,2k} } \right\},o_{j - 1} = \left\{ {o_{j - 1,k} = s_{j,2k} } \right\}.} \\ \end{array}} \right. \end{align}$ | (15) |

b) Prediction: defining the detailed representation characteristics by choosing a predictor.

| $\begin{align} O_{j - 1} - = P(E_{j - 1} ). \end{align}$ | (16) |

c) Update: averaging the signal of rough representation against the original signal.

| $\begin{align} E_{j - 1} + = U(O_{j - 1} ). \end{align}$ | (17) |

2) AKF description

The Kalman filter is a set of mathematical equations that use an underlying process model to estimate the current system state and correct the estimated value. Using this predictor-corrector mechanism,it can approximate an optimal estimation from the linearization of the process and measurement models.

Then the filter consists of the following stages:

a) State prediction

| $\begin{align} {\hat{\pmb X}}_{k/k - 1} = {\pmb{\Phi }}_{k,k - 1} {\hat{\pmb X}}_{k - 1}. \end{align}$ | (18) |

b) Measurement prediction

| $\begin{align} {\hat{\pmb Z}}_{k/k - 1} = {\pmb{H}}_k {\hat{\pmb X}}_{k/k - 1}. \end{align}$ | (19) |

c) Updated state estimation

| $\begin{align} {\hat{\pmb X}}_k = {\hat{\pmb X}}_{k/k - 1} + {\pmb{K}}_k \left( {{\pmb{Z}}_k - {\hat{\pmb Z}}_{k/k - 1} } \right). \end{align}$ | (20) |

d) Gain of the filter

| $\begin{align} {\pmb{K}}_k = {\pmb{P}}_{k/k - 1} {\pmb{H}}_k^{\rm T} \left[ {{\pmb{H}}_k {\pmb{P}}_{k/k - 1} {\pmb{H}}_k^{\rm T} + {\pmb{R}}_k } \right]^{ - 1}. \end{align}$ | (21) |

e) Error covariance

| $\begin{align} {\pmb{P}}_{k/k - 1} = {\pmb{\Phi }}_{k/k - 1} {\pmb{P}}_{k - 1} {\pmb{\Phi }}_{k/k - 1}^{\rm T} + {\pmb{Q}}_{k - 1}. \end{align}$ | (22) |

f) Variance matrix of the observation noise

Considering that the noise structure has changed after wavelet filtering,we cannot simply use experiential value or the statistics of partial noise as the observation noise variance; here the observation noise variance is estimated using the maximum by a posteriori adaptive method.

| $\begin{align} \left\{ {\begin{array}{*{20}ll} {{\pmb{\varepsilon }}_k = {\pmb{Z}}_k - {\hat{\pmb Z}}_{k/k - 1},} \\ {{\hat{\pmb R}}_k = \left( {1 - \displaystyle\frac{1}{k}} \right){\pmb{R}}_{k - 1} + \displaystyle\frac{1}{k}\left( {{\pmb{\varepsilon }}_k {\pmb{\varepsilon }}_k^{\rm T} - {\pmb{P}}_k } \right).} \\ \end{array}} \right. \end{align}$ | (23) |

To test the effectiveness of the proposed method,an autopilot with embedded system based on 2 pieces of high-speed DSPs and microcontroller were developed,to realize the complex algorithms of image processing,navigation and control,as shown in Fig. 2. In addition,it consists of a horizontal main board,housing 3-axis rate gyroscopes,3-axis accelerometers and a barometer. The gyro employs the LCG50 produced by System Donner Inertial Company in British. The accelerometer is the Model 1221 manufactured by Silicon Designs Company in Japan. Besides,the camera used here is a RICOR aerial camera,which is light (about 188g),with a fixed focal length about 6mm. In view of the time limit,we set the frame frequency at 5f/s. For flight stability,the navigation and control cycle are set at 50Hz. Besides,the data fusion method is conducted in 1Hz of data frequency and the coefficient $w$ is determined by a series of images of the landmark before landing.

|

Download:

|

| Fig. 2. The onboard system and the SUAR used in the experiment. | |

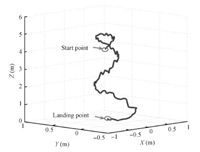

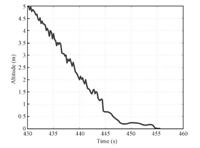

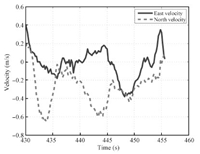

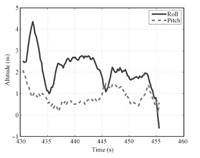

The landing pad used here is a 1.2m × 1.2m specially made landmark. The flight trajectory of the SUAR in the landing process is shown in Fig. 3. When the SUAR arrives above the landing area,it starts to search for the landmark and begin to land. With the constant adjustment for the planed hovering altitude,the SUAR descends the altitude with hovering station. When the altitude is less than 2m,the system reaches to the land disturbance region. Since there exists land disturbance,the pilot control model[20] is used to control the collective motor. The pilot control model provides a safe control mode for the SUAR. When the altitude is less than 0.5m shown in Fig. 4,the gun is decreased with constant speed. Thus,the lift force has been decreased correspondingly. Finally,the SUAR lands the ground at the (0.26,0.42,0). Figs.5 and 6 show the attitude and horizontal velocity in the landing process,respectively.

|

Download:

|

| Fig. 3. The 3D trajectory of the SUAR. | |

|

Download:

|

| Fig. 4. The vertical positioning results. | |

|

Download:

|

| Fig. 5. The attitude estimated during the landing process. | |

|

Download:

|

| Fig. 6. The velocities in two directions. | |

To further demonstrate the proposed method,several landing experiments have been conducted using the wavelet-adaptive KF and the EKF method used before.

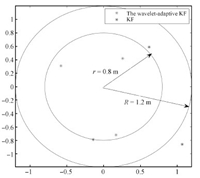

Fig. 7 shows the landing results using the wavelet-adaptive KF and the EKF,separately. The SUAR enters the landing mode roughly ten meters above the landing platform and then slowly descends until it has landed. The average Euclidean distances from the landing target are 63cm and 101cm,respectively.

|

Download:

|

| Fig. 7. The result of landing tests. | |

Focusing on the low measurement performance of the sensor for the low cost SUAR,a navigation method based on vision was proposed to improve the accuracy and reliability of the measurement information. The nonlinear optimization based on Newton iteration method for camera attitude and position was put forward to reduce the projection error and get an optimized solution. With the wavelet filter method,the high frequency noises in the SINS and vision have been eliminated effectively. By using the adaptive Kalman filter,the system fuses the output from SINS and vision to get high precision navigation information. Finally,the landing tests show that the compound navigation system based on the proposed method can provide high performance navigation information. With the high performance position and attitude information,the SUAR can realize stable autonomous landing based on the altitude information.

| [1] | Zhong Li-Na, Wang Jun-Hao, Wang Rong. Auto flight algorithm of quad-rotor helicopter based on magnetic sensor. Journal of Chongqing University of Technology (Natural Science), 2013, 27(12):86-90(in Chinese) |

| [2] | Jiang Bin, Sun Zhi-Feng. The realization of autonomous navigation for quadrotor aircraft. Electronic Technology, 2012, 39(2):10-12, 9(in Chinese) |

| [3] | Najib M, Tarek H. A UAR for bridge inspection:visual servoing control law with attitude limits. Automation in Construction, 2007, 17(1):3-10 |

| [4] | Weiss S, Scaramuzza D, Siegwart R. Monocular-SLAM-based navigation for autonomous micro helicopters in GPS-denied environments. Journal of Field Robotics, 2011, 28(6):854-874 |

| [5] | Wendel J, Meister O, Schlaile C, Trommer G F. An integrated GPS/MEMS-IMU navigation system for an autonomous helicopter. Aerospace Science and Technology, 2006, 10(6):527-533 |

| [6] | Shin E H, El-Sheimy N. Accuracy improvement of low cost INS/GPS for land applications. In:Proceedings of the 2002 U.S. Inst. Navigation, National Technical Meeting. San Diego, CA USA:ION NTM, 2002. 146-158 |

| [7] | Michael N, Fink J, Kumar V. Cooperative manipulation and transportation with aerial robots. Autonomous Robots, 2011, 30(1):73-86 |

| [8] | How J P, Bethke B, Frank A, Dale D, Vian J. Real-time indoor autonomous vehicle test environment. IEEE Control Systems Magazine, 2008, 28(2):51-64 |

| [9] | Saripalli S, Sukhatme G S, Montgomery J F. An Experimental Study of the Autonomous Helicopter Landing Problem-Experimental Robotics VIII. Berlin:Springer-Verlag, 2003. 466-475 |

| [10] | Hermansson J, Gising A, Skoglund M, Schön. Autonomous Landing of an Unmanned Aerial Vehicle, Technical Report, Automatic Control, Linkopings Universitet, Sweden, 2010. |

| [11] | Johnson A E, Montgomery J F, Matthies L. Vision guided landing of an autonomous helicopter in hazardous terrain. In:Proceedings of the 2005 IEEE International Conference on Robotics and Automation. Barcelona, Spain:IEEE, 2005. 3966-3971 |

| [12] | Yu Z Y, Nonami K, Shin J, Demian C. 3D vision based landing control of a small scale autonomous helicopter. International Journal of Advanced Robotic Systems, 2007, 4(1):51-56 |

| [13] | Press W H, Teukolsky S A, Vetterling W T, Flannery B P. Numerical Recipes in C:the Art of Scientific Computing (Second edition). Cambridge:Cambridge University Press, 1992. |

| [14] | Sun Yan-Yue, He Xiao-Hai, Song Hai-Ying, Chen Wei-Long. A blockmatching image registration algorithm for video super-resolution reconstruction. Acta Automatica Sinica, 2011, 37(1):37-43(in Chinese) |

| [15] | Wan Yu-Chai, Liu Xia-Bi, Han Fei-Fei, Tong Kun-Qi, Liu Yu. Online learning of binary classifiers for improving google image search results. Acta Automatica Sinica, 2014, 40(8):1699-1708 |

| [16] | Chong Yan-Wen, Kuang Hu-Lin, Li Qing-Quan. Two-stage pedestrian detection based on multiple features and machine learning. Acta Automatica Sinica, 2012, 38(3):375-381(in Chinese) |

| [17] | Hu M K. Visual pattern recognition by moment invariants. IRE Transactions on Information Theory, 1962, 8(2):179-187 |

| [18] | Latry C, Panem C, Dejean P. Cloud detection with SVM technique. In:Proceedings of the 2007 IEEE International Conference on Geoscience and Remote Sensing Symposium. Barcelona, Spain:IEEE, 2007. 448-451 |

| [19] | Cristiani N, Shawe-Taylor J. An Introduction to Support Vector Machines and Other Kernel-based Learning Methods. Cambridge:Cambridge University Press, 2000. |

| [20] | Lei Xu-Sheng, Fang Jian-Cheng, Bai Lang, etc. Autonomous Taking Off and Landing Technology for the Small Unmanned Rotorcraft Vehicle Based on the Pilot Model, C. N. Patent 102289714B, December 2011. (in Chinese) |

2015, Vol.2

2015, Vol.2