2. Institute of Intelligent Machines, Chinese Academy of Sciences, Hefei 230031, China

In recent years,research interest in unmanned aerial vehicles (UAVs) has grown rapidly. UAVs are widely applied in search-and-rescue,surveillance and exploration. UAVs can be classified into three categories,i.e.,rotary,fixed and flapping wing types. The quadrotor we use (see Fig. 1) is a micro aerial vehicle (MAV) and has four rotary wings. A quadrotor is usually equipped with many kinds of sensors,such as GPS,laser range finder,inertial measurement unit (IMU),sonar or air pressure sensors. It has distinct advantages in agility and maneuverability. However,a quadrotor usually has limited payload, power and computational resources. It also has unstable and fast dynamics which may result in a series of state estimation,sensing and control problems.

|

Download:

|

| Fig. 1. The quadrotor used in our experiments. | |

Researchers have been able to perform autonomous flight and navigation in outdoor environments by using GPS and inertial sensors[1, 2]. However,GPS is unavailable in indoor environments and most urban canyon. Up to now,there have been many algorithms developed for ground and underwater vehicles in GPS-denied environments. Because of the limited payload,power and computational resources,algorithms for quadrotors are still in development. Many researches have been carried out for autonomous flight and navigation in GPS-denied environments. External motion capture systems such as Vicon[3, 4] were employed in some works. Artificial markers were also applied to realize autonomous navigation[5]. These approaches limit the range of quadrotor and are not suitable.

By using laser range finder,excellent autonomous flight and navigation system was proposed in [6]. Some other researches performed the autonomous flight and navigation by using cameras and laser range finders[7, 8, 9, 10]. However,laser range finders are expensive and have additional weight and power requirements for quadrotors. In contrast,cameras are lightweight and consume less power. Besides,cameras can obtain plentiful information of the environments. Recently,vision-based algorithms have become popular.

For visual navigation,there are mainly two types of methods according to the number of cameras. One type is based on the stereo devices. Some visual navigation systems were presented based on stereo cameras[5, 11, 12]. The Kinect was also used in the indoor 3D exploration with a quadrotor[10]. However,stereo vision systems often have limited range due to the limited baseline. The calibration and matching of stereo systems are complex. They also have larger weight,size and power consumption compared with the monocular camera.

Visual navigation based on a monocular camera is another type of method. The monocular methods can also be divided into two types. The first is based on filtering approaches. Some monocular simultaneous localization and mapping (SLAM) algorithms were realized by using extended Kalman filter[4, 13, 14]. The second is based on keyframes[15, 16, 17]. For example,Klein et al.[16, 17] presented a real-time visual SLAM system named parallel tracking and mapping (PTAM) based on keyframes. Monocular algorithms usually lack the metric scale. More details about the two types were discussed in [18, 19]. The conclusion was that the keyframe approaches gave more accurate results per unit of computing time,while the filtering approaches had advantages in the systems with limited processing resources.

In spite of limited processing resources,some monocular visual navigation systems for UAVs were presented based on keyframe methods[20, 21, 22, 23] to obtain more accurate results. Many monocular visual navigation systems for quadrotors run on ground computers[20, 22],while some simple algorithms were performed by processors on the quadrotor[21, 23]. Blosch et al.[20] presented a pure vision approach for the quadrotor navigation based on PTAM. But this method needed to select the initial frames manually and lacked the metric scale. In order to obtain more accurate results with less computation time,people also make use of some other sensors,such as air pressure sensors and IMUs. Achtelik et al.[21] made use of an IMU and an air pressure sensor to estimate the metric scale and to realize the hovering of the quadrotor. The air pressure sensor was not suitable for indoor environments. Engel et al.[22] presented a visual navigation system based on keyframes employing a front camera,a down-looking camera and an IMU. So it was a kind of multi-camera method. By using a monocular camera and an IMU,Weiss et al.[23] presented the speed estimation algorithm based on inertial-optical flow, which could obtain the metric state estimation for quadrotors. This approach was prone to position drift. They only realized the hovering. Up to now,there have been no satisfied monocular visual navigation systems for quadrotors,which are still in development.

The motivation of our work is to present a multi-sensor fusion based monocular visual navigation solution for a quadrotor with an IMU and a monocular camera as its main sensors,considering the limited payload,power and computational resources. For the indoor environments and many parts of the urban canyon,we realize the autonomous visual navigation for a quadrotor in GPS-denied and markerless environments. In the research work on monocular visual navigation system,we solve some key problems such as the metric scale estimation,and develop some accurate and efficient algorithms.

For visual navigation,pose estimation is quite important. Pose estimation can be calculated from 2D-to-2D point correspondences[24, 25, 26]. Pose estimation can also be calculated from 3D-to-2D point correspondences. This problem is known as perspective-n-point (PnP) problem. PnP problem can be solved by two types of methods,linear non-iterative methods and iterative methods. Some linear non-iterative methods were presented in [27, 28, 29, 30, 31]. Lepetit et al.[31] proposed a non-iterative solution EPnP which had higher accuracy and lower computational complexity than other non-iterative methods. Lu et al.[32] presented a quite accurate iterative algorithm. Some researches of pose estimation from planar targets were also presented in [33, 34]. Assuming a vertical direction known from IMU,Kukelova et al.[35] presented new closed-form solutions to the absolute pose estimation from two 3D-2D correspondences. In visual navigation,the 3D-to-2D methods are more popular than the 2D-to-2D methods.

The contributions of this paper are summerized as follows. Firstly,a multi-sensor fusion based monocular visual navigation solution is presented for the platform with limited payload,power and computational resources. The system with an IMU,a sonar and a monocular down-looking camera as its main sensors is able to work well in GPS-denied and markerless environments. Secondly, different from keyframe-based systems[15, 16, 17, 20, 21, 22, 23],we construct our visual navigation system using keyframes and keypoints as well in each frame. Thirdly,based on the omni-directional flight characteristics of quadrotor,we give the rapid preliminary motion estimation by using camera and IMU data. A multi-level judgment rule is then presented for updating the keyframes or the keypoints. This is beneficial to hovering conditions and can reduce the error accumulation effectively. Furthermore,the common monocular visual systems lack the metric scale,and we solve the metric scale estimation problem combining a sonar sensor in the initialization of the navigation system. Finally,we present the novel IMU+3P pose estimation algorithm which uses three point correspondences and IMU data. This algorithm transforms the 6-DOF pose estimation problem into the 4-DOF problem and obtains more accurate results with less computation time.

Ⅱ. FRAMEWORK A. Hardware Structure of the Monocular Visual Navigation SystemThe quadrotor in this paper is named X450 produced by XAircraft corporation. The empty weight of X450 is about 650 g. The safety payload is about 500 g which involves the lithium polymer battery. So there is quite limited payload for onboard devices. Our quadrotor is mainly equipped with an ATmega2560 micro-controller,a CCD monocular camera,an MPU6050 IMU and a sonar sensor. The IMU consists of three-axis gyroscopes and three-axis acceleration sensors. The control algorithm is executed onboard and the vision algorithms are implemented on a ground computer. Images obtained by onboard camera are transferred to the ground computer by a 5.8 G wireless image transmission device. The selected results of sensors are transferred to the ground computer by ZigBee wireless transmission modules. Finally, the calculated results on the ground computer are transferred back to the quadrotor by ZigBee modules.

Comparing with other UAVs,our quadrotor is a micro aerial vehicle,which has limited payload,power and computational resources. Although the vision algorithms are now performed on the ground computer,we are developing the real onboard vision algorithms. In the future,the visual navigation system will be implemented on the high-performance embedded device on the quadrotor.

B. Framework of The Monocular Visual Navigation SystemHere we introduce the framework of the monocular visual navigation system. A brief illustration of the framework can be seen in Fig. 2. In our visual navigation,only unknown natural features are employed. We do not make use of any known landmarks.

|

Download:

|

| Fig. 2. Framework of the monocular visual navigation system. | |

1) Keyframes and keypoints: Different from most of the keyframe-based systems,the visual navigation system in this paper is based on both keyframes and keypoints in each frame. The keyframes and keypoints make up the map. The keyframes are the nodes in the map. We store the features,the 3D positions of features and the pose estimation of the quadrotor for each keyframe.

Keypoints are a set of good feature points between keyframes. When the quadrotor flies between the adjacent keyframes,we mainly use keypoints to calculate the motion estimation. These keypoints are obtained from the results of frames matching. We choose the points whose Euclidean distances of correspondences are less than a certain threshold as the keypoints. These keypoints can be recovered to have 3D position information by triangulation. When new frame comes,we update the keypoints. The keypoints can be seen as good landmarks between keyframes. These keypoints are stored between keyframes. When new keyframe comes,we will store the features,the 3D coordinates of features,the motion estimation of the quadrotor,and remove the previous keypoints.

2) Monocular visual navigation system: In this paper,we present a multi-sensor fusion based monocular visual navigation system for the quadrotor with limited payload, power and computational resources. The system with an IMU,a sonar and a monocular down-looking camera as its main sensors is able to work well in GPS-denied and markerless environments. This visual navigation system is based on both keyframes and keypoints. GPU-based SURF is applied to feature detection and feature matching between frames. Utilizing the omni-directional flight characteristics of the quadrotor,we propose the rapid preliminary motion estimation by using camera and IMU data. A multi-level judgment rule is then presented for updating the keyframes or the keypoints selectively. This is beneficial to hovering conditions. In the initialization of the navigation system,we perform matching among three sequence frames. The general monocular visual systems usually lack the metric scale. By using sonar data,the metric scale estimation problem is solved. In order to get accurate motion estimation with less computation time,we present a novel IMU+3P algorithm which uses three point correspondences and IMU data. This algorithm transforms the 6-DOF pose estimation problem into the 4-DOF problem and is able to obtain more accurate results. In this framework,motion estimations between the newest two adjacent frames are performed at each new frame arrival. We will also perform the motion estimation between the new frame and the nearest keyframe every other frame. When the translation from the nearest keyframe is quite large,we will also add the new frame as a new keyframe. Local bundle adjustment and some measures are made to reduce the error accumulation and optimize the results. Local bundle adjustment is implemented every other $m$ frames when the estimation with the nearest keyframe is not performed.

Ⅲ. MONOCULAR VISUAL NAVIGATION SYSTEM A. Feature Selection And MatchingIn this paper,we perform visual navigation only depending on natural features. We do not use the known artificial landmarks. In recent years,some excellent feature detectors and descriptors,such as features from accelerated segment test (FAST)[36],adaptive and generic accelerated segment test (AGAST)[37] and binary robust independent elementary features (BRIEF)[38],have been presented. These algorithms can perform rapidly and have good results. But they usually have lower accuracy than scale invariant feature transform (SIFT)[39] and SURF[40]. SURF has excellent properties in repeatability, scale invariance and rotation invariance. In this paper,we perform a GPU-based SURF algorithm which has good results and completely meets our real-time requirement.

In order to obtain point correspondences,we perform feature matching. Some other works[5, 16, 17, 20, 21] used features tracking approach to get point correspondences. Quadrotor has high agility and maneuverability,so the tracking approach is unsuitable for our work. In our visual navigation system,we use feature matching method to get point correspondences.

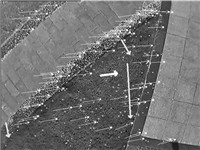

An illustration of feature matching between two real image frames can be seen in Fig. 3. In order to obtain accurate results with less computation time,some measures have been implemented. We adjust the threshold to set the number of original detected features at a low level. We only select at most 300 features for the following processing. We use K-d tree to speed up feature matching. At the same time,we only select these point correspondences whose ratio of the nearest distance to the second nearest distance is smaller than 0.8. When the quadrotor is flying,the image frames may have some texture regions and motion blur. The transmission from the quadrotor to the ground computer will also lower the image quality. Because of these reasons,there are some features which might match more than one feature in the other image. Only the pair of points which have each other as a preferred match are considered as correct correspondences.

|

Download:

|

| Fig. 3. Real matching results using GPU-based SURF. | |

In Fig. 3,these images are gathered by the flying quadrotor and then transmitted to the ground computer in real outdoor environments. There are mainly grass and path from which good features usually cannot be extracted. The real images are in low quality and have some blur. But from Fig. 3,we can see that our matching method still gets good matching results.

B. Preliminary Motion Estimation in Pixels1) Voting algorithm for preliminary translation estimation in pixels: The image matching mentioned above provides us with results similar to sparse optical flow algorithms. Each point correspondence can give the motion estimation in pixels. By using all of the feature correspondences,we have the global initial motion estimation in pixels. Here we use a voting algorithm to get the preliminary translation estimation (see Fig. 4). The preliminary translation estimation is figured by large-sized arrow in the center. The small-sized arrows denote the translation between right feature matching points. By using the preliminary translation estimation,we also reject some wrong feature matching points. These are figured by medium-sized arrows and their directions are obviously. different from others. Then we select the matching points whose Euclidean distances are less than a certain threshold as the keypoints. The preliminary translation estimation is decomposed to $X_Q$ axis and $Y_Q$ axis. Then we denote the preliminary translation estimation in pixels as $x_{p0}$,$y_{p0}$ for $X_Q$ axis and $Y_Q$ axis,respectively.

|

Download:

|

| Fig. 4. The preliminary translation estimation in pixels by voting algorithm. | |

2) The consistency problem of image data,sonar data and IMU data: At first,we talk about the consistency problem of image data,sonar data and IMU data. Strictly speaking,image data,sonar data and IMU data may not arrive at the ground computer at the same time. Also they may not be gathered at the same time. By real experiments,the total delays of IMU,sonar,and image data are respectively about 15 ~25 ms,45 ms,and 40 ~ 80 ms,respectively.

On the average,the corresponding image data arriving at ground computer is about 40 ms later than IMU data and 15 ms later than sonar data. To solve this problem,the past IMU data and sonar data are stored in the computer. When we use image,sonar,and IMU data for motion estimation,we combine the image data with the corresponding IMU data which arrives 40 ms before. Meanwhile,we use the sonar data which arrived just before the current one.

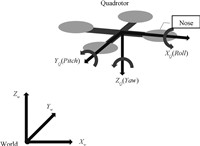

3) Introduction of the coordinate systems: A brief illustration of the world coordinate system and the quadrotor coordinate system can be seen in Fig. 5. The world coordinate system is on the ground. The quadrotor coordinate system is shown in Fig. 5. $X_Q$ axis is the nose direction of quadrotor. Here roll angle denotes the rotation about $X_Q$ axis, pitch angle denotes the rotation about $Y_Q$ axis,and yaw angle denotes the rotation about $Z_Q$ axis.

|

Download:

|

| Fig. 5. The quadrotor coordinate system and the world coordinate system. | |

4) The refined preliminary translation estimation: The above translation estimation $x_{p0}$,$y_{p0}$ in pixels is obtained when the quadrotor may have tilt angle with vertical direction. For real translation estimation in pixels,we need to refine the preliminary results of translation estimation.

Quadrotor has wonderful characteristic of omni-directional flying. Usually the nose direction of the quadrotor changes little during flying. That is to say that the yaw angle of the quadrotor changes little between two adjacent frames. Assume that the yaw angles between two adjacent frames are the same,we need not consider the variance of yaw. For this reason,we use IMU data to refine the preliminary translation estimation and have the rough and preliminary motion estimation in pixels.

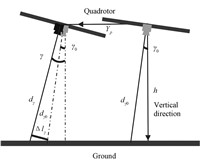

A brief illustration can be seen in Fig. 6. The IMU provide us with roll angle $\gamma$ and pitch angle $\beta$ of the quadrotor. Here we first talk about the roll angle situation,and the pitch angle situation is similar. Here $d_{\gamma0}$ denotes the distance between the quadrotor and the scene in last frame in the case of inclined roll angle. $d_{\gamma}$ denotes the distance between the quadrotor and the scene in current frame in the case of inclined roll angle. $\gamma_{0}$ and $\gamma$ are the roll angles obtained by IMU for the last frame and the current frame.

|

Download:

|

| Fig. 6. An illustration for the refined preliminary motion estimation in pixels. | |

The real tilt angle $\varphi$ of the quadrotor can be calculated from the roll angle $\gamma$ and the pitch angle $\beta$ with the vertical direction known. The real distance obtained by the sonar sensor is $d$ in the current frame. Then we have

| $ h = d \cdot \cos \varphi = {d_\gamma } \cdot \cos \gamma = {d_{{\gamma _0}}} \cdot \cos {\gamma _0}, $ | (1) |

where $h$ is the real height of the quadrotor. From (1), we have

| $ {d_\gamma } = d \cdot {\frac {\cos \varphi}{\cos \gamma}}, $ | (2) |

| $ {d_{{\gamma _0}}} = d \cdot {\frac{\cos \varphi}{\cos {\gamma _0}}}. $ | (3) |

Then the translation $\Delta {l_\gamma }$ resulted by rotation variation of a roll angle can be obtained by

| $ \Delta {l_\gamma } = {d_{{\gamma _0}}} \cdot \sin (\gamma - {\gamma _0}). $ | (4) |

In this paper,the intrinsic parameters of the camera are known in advance. The focal length of the camera in pixels is $f$. Then we have

| $ \Delta {l_{\gamma p}} = {d_{{\gamma _0}}} \cdot \sin (\gamma - {\gamma _0}) \cdot{\frac {f}{{d_{{\gamma _0}}}}} = \sin (\gamma - {\gamma _0}) \cdot f, $ | (5) |

where $\Delta {l_{\gamma p}}$ denotes the translation in pixels caused by the rotation variation of roll angle. $\Delta {l_{\gamma p}}$ affects the translation results of the quadrotor in $Y_Q$ axis. Then the refined translation estimation $y_p$ of $Y_Q$ axis in pixels is

| $ {y_p} = \frac{{y_{p0}} - \Delta {l_{\gamma p}}}{\cos \gamma} = \frac{{y_{p0}} - \sin (\gamma - {\gamma _0}) \cdot f}{\cos \gamma}. $ | (6) |

For the pitch angle,the calculation is similar to that of the roll angle. So $d_\beta$ and $d_{\beta _0}$ are

| $ {d_\beta } = \frac{{d_\gamma }}{\cos \beta}, $ | (7) |

| $ {d_{{\beta _0}}} = \frac{{d_\gamma }}{\cos {\beta _0}}. $ | (8) |

We also get $\Delta {l_{\beta}}$ and $\Delta {l_{\beta p}}$ which are caused by the rotation variation of pitch angle as

| $ \Delta {l_\beta } = {d_{{\beta _0}}} \cdot \sin (\beta - {\beta _0}), $ | (9) |

| $ \Delta {l_{\beta p}} = {d_{{\beta _0}}} \cdot \sin (\beta - {\beta _0}) \cdot \frac{f}{{d_{{\beta _0}}}} = \sin (\beta - {\beta _0}) \cdot f. $ | (10) |

The $\Delta {l_{\beta p}}$ will affect the translation results of the quadrotor in $X_Q$ axis. Then we get the refined translation estimation $x_p$ of the $X_Q$ axis in pixels,i.e.,

| $ {x_p} = \frac{{x_{p0}} - \Delta {l_{\beta p}}}{\cos \beta} = \frac{{x_{p0}} - \sin (\beta - {\beta _0}) \cdot f}{\cos \beta}. $ | (11) |

Furthermore,we can also obtain the refined translation estimations of $X_p$ and $Y_p$ in metric scale,which are

| $ {X_p} = - {d_{{\beta _0}}} \cdot \sin (\beta - {\beta _0}) + {x_{p0}} \cdot \frac{{d_\beta }}{f \cdot \cos \beta }, $ | (12) |

| $ {Y_p} = - {d_{{\gamma _0}}} \cdot \sin (\gamma - {\gamma _0}) + {y_{p0}} \cdot \frac{{d_\gamma }}{f \cdot \cos \gamma }. $ | (13) |

In fact,the refined translation estimation $X_p$ and $Y_p$ may not be accurate. Because when there are obstacles or the ground which is not smooth,the sonar data may not be correct. But the refined translation estimation $x_p$ and $y_p$ in pixels are accurate.

C. Initialization Problem for Visual Navigation SystemFor monocular visual system,the initial motion estimation is quite important. Generally,the results of monocular visual systems lack metric scale. They usually get the results of relative scale. So the accurate initial motion estimation will be beneficial to the following motion estimations and visual navigation system.

In the initialization step,the quadrotor flies smoothly and steadily. The navigation system matches three sequent frames until the suitable keyframes appear. The suitable keyframes should meet the requirements that the refined translation estimation $x_p$ and $y_p$ in pixels between the first two keyframes are in a certain range. And the refined translation estimation $x_p$ and $y_p$ in pixels between last two keyframes should also be in a certain range. Then we will select the first keyframe and the third keyframe as our initial keyframes. The place where the quadrotor gets the first keyframe is set as the origin position. The point correspondences are also between the first keyframe and the third keyframe.

Here we use the 2D-to-2D relative pose estimation algorithm presented in [26]. It provides accurate relative motion estimation using IMU data. After calculating the essential matrix between the first and the third keyframes,we get the relative motion estimation of translation and rotation. The result of relative motion estimation lacks metric scale. Here we use sonar data to recover the absolute scale. When relative motion estimation is calculated,we triangulate the feature points matched between the first and the third keyframes. Then we will get 3D positions $(X_i^s,Y_i^s,Z_i^s)~(i = 1,2,...,n)$ of the feature points on a certain scale in the coordinate system of the current quadrotor. The average $Z_{ave}$ of all these 3D points is

| $ {Z_{ave}}\begin{array}{*{20}{c}} { = \frac{{{{\left\| {(Z_1^s,Z_2^s,\cdots ,Z_n^s)} \right\|}_1}}}{n}.} \end{array} $ | (14) |

And the real current distance obtained by sonar data is $d$. Assumed that most of the features are on the ground plane,we have the following relationships:

| $ d = k \cdot {Z_{ ave}}, $ | (15) |

| $ {h_{ave}} = d \cdot \cos \beta = k \cdot {Z_{ ave}} \cdot \cos \beta, $ | (16) |

where $k$ is the correction factor,and $h_{ave}$ is the real average height of these 3D feature points along vertical direction. And $({X_i},{Y_i},{Z_i}) = (kX_i^s,kY_i^s,kZ_i^s)~(i = 1,2,...,n)$ are the real 3D positions of these feature points. These feature points with real 3D positions can be seen as natural landmarks.

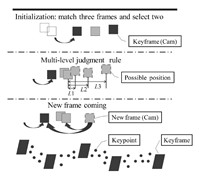

D. Multi-level Judgment RuleWhen a new frame comes,the feature matching is performed between the new and the previous frames. The original motion estimation in pixels can be obtained. It is figured in large-sized arrow (see Fig. 3). The refined translation estimation $x_p$ and $y_p$ in pixels are also calculated. Then the refined total translation $L_p$ of $x_p$ and $y_p$ can be obtained as

| $ {L_p} = {\left\| {({x_p},{y_p})} \right\|_2}. $ | (17) |

A multi-level judgment rule based on $L_p$ is given here (see Fig. 2).

1) When $0 \le {L_p} \le {L_1}$,the pose between adjacent frames changes slightly. At this moment,the quadrotor is hovering,and we need not to perform the accurate motion estimation. Of course, we may calculate the motion estimation using some constant keypoints. At this time,we do not update any keypoint or keyframe. This can reduce the error accumulation effectively when pose varies slightly and is beneficial to hovering conditions.

2) When ${L_1} < {L_p} \le {L_2}$,the pose between adjacent frames changes a little. There is obvious movement of the quadrotor. The accurate motion estimation is performed using point correspondences. And the keypoints are updated and employed when the next frame comes.

3) When ${L_2} < {L_p} \le {L_3}$,the pose between adjacent frames changes a lot. The accurate motion estimation should be performed by using point correspondences. It is time that we should update the keyframes.

4) When ${L_3} < {L_p}$,the pose changes quite a lot. Generally, this situation seldom occurs. There may not be enough matching regions between the adjacent frames. So we set the current frame as a new keyframe. The following frame will be matched with this new original keyframe. Until the previous keyframes or enough keypoints are observed again,we combine the results with the previous results.

When the total pose variation of a few frames is large,we need to update the keyframes and add the new frame as a new keyframe. The motion estimation between the new frame and the nearest keyframe is made every other frame. When the translation with the nearest keyframe is large,we will also update the keyframes and add the new frame as a new keyframe.

E. Accurate Motion EstimationThe accurate motion estimation can be calculated by using previous results of feature point correspondences. For monocular systems, there are two types of approaches. One is 2D-to-2D approach,and the other one is 3D-to-2D approach. Here we use the latter one, because the former needs more point correspondences with more computation time and has higher error accumulation for long-term navigation. We present an IMU+3P pose estimation algorithm which uses three 3D-to-2D point correspondences and IMU data. When a new frame arrives,we have the accurate pose estimation. In fact,we also get the accurate motion estimation.

1) Problem formulation: When the quadrotor is flying in the sky,the onboard camera observes the ground scene. The keypoints with 3D positions are obtained by triangulating and denoted as $M_i^w = (X_i^w,Y_i^w,Z_i^w)'~(i = 1,2,...,n)$ in the world coordinate system. And their corresponding coordinates in the camera coordinate system are $M_i^c = (X_i^c,Y_i^c,Z_i^c)'~(i = 1,2,...,n)$. A rigid transformation is obtained as follows:

| $ M_i^c = R \cdot M_i^w + T, $ | (18) |

where $R = f(\alpha ,\beta ,\gamma )$ and $T = ({t_x},{t_y},{t_z})'$ are the rotation matrix and translation vector. The intrinsic parameters of the camera are known in advance. So the image coordinates of the keypoints in pixels $m_i^p = (u_i^p,v_i^p)'~(i = 1,2,...,n)$ can be transformed into the normalized image coordinates ${m_i^n} = ({u_i},{v_i})'~(i = 1,2,...,n)$. Here we employ the homogeneous coordinates ${m_i} = ({u_i},{v_i},1)'~(i = 1,2,...,n)$ transformed from the normalized image coordinates ${m_i^n} = ({u_i},{v_i})'~(i = 1,2,...,n)$. They have the following relationship:

| $ \lambda {m_i} = M_i^c = R \cdot M_i^w + T, $ | (19) |

where $\lambda$ is scale factor. Here $M_i^w$ and $m_i$ make up a pair of 3D-to-2D point correspondence. We need to calculate the rotation matrix $R$ and the translation vector $T$ by a set of 3D-to-2D point correspondences.

2) Measures for accurate motion estimation: For accurate motion estimation,some measures should be taken. By using feature matching algorithm,a set of feature matching results are obtained. The current keypoints are also obtained. Usually the number of keypoints is larger than the number required by IMU+3P algorithm. Also there may be some outliers in these feature matching results. Although we reject some outliers by voting algorithm in the previous section,there may still be some outliers remained.

In order to obtain good keypoints,we first order these keypoints according to the Euclidean distance of their feature correspondences. We denote this ordered set of feature correspondences as $P = \{ {p_1},{p_2},\cdots,{p_n}\}$. For the IMU+3P algorithm,we select three correspondences from the set each time,which meet the following rules:

| $\begin{array}{l} \left( {{p_{{a_1}}},{p_{{b_1}}},{p_{{c_1}}}} \right) < \left( {{p_{{a_2}}},{p_{{b_2}}},{p_{{c_2}}}} \right),\\ \begin{array}{*{20}{l}} {[({a_1} + {b_1}) \le ({a_2} + {b_2}) \cap {c_1} \le {c_2}]\; \cap \;{a_1} < {b_1} < {c_1} \cup \;}\\ {\qquad [{a_1} \le {a_2} \cap ({b_1} + {c_1}) \le ({b_2} + {c_2})]\; \cap \;{a_2} < {b_2} < {c_2}.} \end{array} \end{array}$ | (20) |

A typical sequence is $({p_1},{p_2},{p_3})$, $({p_1},{p_2},{p_4})$,$({p_1},{p_3},{p_4})$, $({p_1},{p_2},{p_5})$,$({p_1},{p_3},{p_5})$, $({p_2},{p_3},{p_4})$,$({p_1},{p_2},{p_6})$, $({p_2},{p_3},{p_5})$,$({p_2},{p_3},{p_6})$. For the purpose of accurate estimation and outlier elimination,we perform random sample consensus (RANSAC) for the IMU+3P algorithm. The RANSAC algorithm is performed according to the typical sequence.

3) The IMU+3P algorithm: A good way for getting more accurate results with less computation time is to use the IMU sensor for pose estimation. The minimal number of point correspondences for the absolute pose estimation problem is two with known roll and pitch angles[35]. But the two-correspondence method may have higher translation error. In this paper,we present a novel IMU+3P pose estimation algorithm which uses three 3D-to-2D point correspondences and IMU data. By using IMU data,the 6-DOF pose estimation problem is transformed into the 4-DOF problem. The accuracy of our IMU is about 0.3 degree. This is a relatively good accuracy,while other general pure vision algorithms are difficult to reach such a level. Given two accurate angles by IMU,the remaining 4-DOF problem can obtain more accurate results with less computation time.

For the quadrotors,the rotation matrix $R$ should be expressed following the default rule in aviation which is different from that in [35]. In our work,rotation matrix $R$ is expressed as

| $ R = {R_z}{R_y}{R_x}, $ | (21) |

where $R_z$ is the rotation matrix for the yaw angle, $R_y$ is the rotation matrix for the pitch angle and $R_x$ is the rotation matrix for the roll angle. In our work,the IMU provides us with the roll and pitch angles. So $R_x$ and $R_y$ are known to us. Then the unknown $R_z$ is

| $ {R_z} = \left[{\begin{array}{*{20}{c}} {\cos \alpha } & { - \sin \alpha } & 0 \\ {\sin \alpha } & {\cos \alpha } & 0 \\ 0 & 0 & 1 \\ \end{array}} \right], $ | (22) |

where $\alpha$ is the yaw angle. Thus,(19) can be written as

| $ \lambda {m_i} = \left[{{R_z}(\alpha ){R_y}{R_x}|T} \right]{M_i}, $ | (23) |

where $M_i$ is homogeneous coordinates of 3D points. For image points,we use the homogeneous normalized image coordinates ${m_i}$ here. The substitution $q = \tan (\alpha /2)$ can be used to simplify (22) as

| $ (1 + {q^2}){R_z}(q) = \left[{\begin{array}{*{20}{c}} {1 - {q^2}} & { - 2q} & 0 \\ {2q} & {1 - {q^2}} & 0 \\ 0 & 0 & {1 + {q^2}} \\ \end{array}} \right]. $ | (24) |

Then (23) can be written as

| $ {\left[{{m_i}} \right]_ \times }\left[{{R_z}(q){R_y}{R_x}|T} \right]{M_i} = 0, $ | (25) |

where ${[{m_i}]_ \times }$ is the skew symmetric matrix of $m_i$ and the rank of ${[{m_i}]_ \times }$ is two. Equation (25) produces three polynomial equations and only two are linearly independent. In this case,there are four unknown variables $q$, $t_x$,$t_y$ and $t_z$. In theory,two point correspondences can solve this problem. We name this algorithm as IMU+2P algorithm. But only two correspondences may result in higher translation error in our real experiments. So we make use of three point correspondences to calculate the pose estimation. By using least squares method,we get the final pose estimation. We name this algorithm as IMU+3P algorithm.

F. Measures for Reducing Error AccumulationFor visual navigation system,the error accumulation affects the accuracy of system seriously. Common with other keyframe-based navigation systems,the local bundle adjustment is performed on the nearest $n$ keyframes in this paper. Local bundle adjustment can optimize the poses and parameters.

In addition,some other measures for reducing error accumulation are also performed in this paper. When the quadrotor is hovering or moves slightly,the keypoints will be made full use of. When the quadrotor moves slightly,we will not update any keypoints or keyframes. We use some constant keypoints to get the motion estimation until the quadrotor moves significantly. This measure can reduce the error accumulation effectively. For accurate motion estimation,we triangulate the keypoints and set them as 3D natural landmark. This measure links the quadrotor and the scenes. Cases with large error will be found easily and be eliminated.

When there are no obstacles and the ground is smooth,sonar data can be used to refine the results of pose estimation and the 3D position of triangulated keypoints. But considering the general situations,the sonar data is only used in the initialization of the visual navigation system in this paper.

Ⅳ. EXPERIMENTS AND RESULTSIn this section,we first show the robustness and accuracy of IMU+2P and IMU+3P algorithms in simulation experiments. After discussing these methods,the reason why we select the IMU+3P algorithm is given. We perform our monocular visual navigation system in real indoor and outdoor environments. We demonstrate the property of our system in real experiments. The angular accuracy of our IMU is about 0.3 degree,while the accuracy of usual low cost IMUs is about 0.5 degree. All these programs are written in C/C++. The control algorithm is performed onboard and the vision algorithms are implemented on a ground computer which has two i5 CPUs and the GTX 650 graphics card.

A. Simulation Experiments for IMU+3PIn these simulation experiments,we evaluate the robustness and accuracy of the IMU+2P and IMU+3P algorithms with different image noises and IMU noises. Here the 3D points were generated randomly with the Gaussian distributions on a near-plane scene. The number of 3D points was 200. A calibrated virtual camera was also generated to look at the scene. In order to improve the reliability of simulation experiment,we used the intrinsic parameters of the real camera as the intrinsic parameters of the virtual camera. At this moment,the translation and rotation between the quadrotor and the scene were known to us. We set these values as the ground-truth translation and rotation. Each 3D point was projected by the virtual camera. We generated different Gaussian random noises to simulate different image noises and IMU noises. Image noises were added to the image coordinates obtained by the virtual camera. IMU noises were added to the rotation matrix. The translation error was described by the angle between the estimated translation direction and the ground-truth direction. The rotation error was described by the smallest angle of rotation which brought the estimated rotation to the ground-truth rotation.

We compared IMU+3P with IMU+2P,GAO[30],LHM[32] and EPnP[31] algorithms. IMU+3P and IMU+2P respectively employed three and two point correspondences every time. Here GAO,LHM and EPnP are all pure visual pose estimation algorithms and make no use of IMU data. GAO,LHM and EPnP employed four point correspondences every time. For each calculation,we performed the RANSAC algorithm based on the 200 points. These algorithms were tested with different image noise parameters and IMU noise parameters respectively. For each experiment with a certain parameter,we performed the calculation 300 times and chose the median value as our result.

1) Pose error with different image noises: Here we set the IMU noise to be the constant value of 0.5 degree and compared these algorithms with different image noises.

Fig. 7 (a) shows the translation error with different image noises. The iterative algorithm LHM has the highest translation accuracy,while IMU+2P has the lowest accuracy. Here the accuracy of IMU+3P is quite good. The results of IMU+3P are close to the excellent non-iterative algorithm EPnP which makes use of four point correspondences.

|

Download:

|

| Fig. 7. Translation error and rotation error with different image noises. | |

Fig. 7 (b) shows the rotation error with different image noises. Here IMU+2P and IMU+3P have quite good accuracy in rotation estimation. GAO,LHM,EPnP which make no use of IMU data are all inferior to IMU+2P and IMU+3P. Besides,IMU+3P has slightly lower rotation accuracy than IMU+2P.

We set the IMU noise to be the constant value of 0.5 degree here. From Fig. 7,we find that IMU+3P has good results of both translation and rotation with different image noises. In fact,the accuracy of our IMU is about 0.3 degree which is less than 0.5 degree. Hence,better results could be obtained by using our IMU.

2) Pose error with different IMU noises: It should be noted that the errors of GAO,LHM and EPnP algorithms were constant values in Fig. 8. They did not change with different IMU noises,because these methods made no use of IMU data and were shown here only for comparison. Here we set the image noise to be the constant value of 1.0 pixel for all the algorithms,and show the results of IMU+3P and IMU+2P with different IMU noises.

|

Download:

|

| Fig. 8. Translation error and rotation error with different IMU noises. | |

Fig. 8 (a) shows the translation error with different IMU noises. The translation error of IMU+2P is the highest one among these algorithms. When IMU noise is less than 1.0 degree,the IMU+3P has good translation results. When IMU noise is greater than 1.0 degree,IMU+3P has relatively large translation error.

Fig. 8 (b) shows the rotation error with different IMU noises. Here IMU+2P and IMU+3P have higher accuracy in rotation estimation than GAO,LHM and EPnP. When IMU noise is small,IMU+2P and IMU+3P have excellent rotation estimation results. IMU+2P has slightly better accuracy than IMU+3P.

From Fig. 8 (a),we find that IMU+3P algorithm has good translation results when IMU noise is less than 1.0 degree. In Fig. 8 (a),IMU+2P and IMU+3P both have good rotation results when IMU noise is less than 1.8 degree. Usually the smaller IMU noise is, the better the results of translation and rotation will be. In this paper,the angular accuracy of our IMU is about 0.3 degree and quite meets the requirements of algorithms.

3) Discussion: From Fig. 7,we see that IMU+3P has good results of both translation and rotation with different image noises,while IMU+2P has bad results of translation. In Fig. 8,IMU+3P also has good results of both translation and rotation when IMU noise is less than 1.0 degree,while IMU+2P has bad results of translation. The difference of rotation results between IMU+3P and IMU+2P is slight. The increment on computation time for IMU+3P is little and still within our computation ability. Besides,GAO,EPnP and LHM all have worse results of rotation and longer computation time than IMU+3P. So in this paper,we choose IMU+3P as our motion estimation algorithm in terms of the trade-off between the accuracy of translation and rotation.

Generally speaking,the number of point correspondences has a significant influence on the accuracy of pose estimation. When the number of point correspondences is in a certain range,the more points are used,the higher accuracy will be obtained. GAO,EPnP and LHM all make use of four point correspondences,while IMU+3P and IMU+2P respectively employ three and two. So GAO,EPnP and LHM have higher translation accuracy than IMU+3P and IMU+2P,but have lower rotation accuracy. This phenomenon can be explained as follows. IMU+3P and IMU+2P directly get the roll angle and the pitch angle from the highly accurate IMU data. The accuracy of our IMU is about 0.3 degree,which general pure vision algorithms are difficult to reach. Given two accurate angles by the IMU,the remaining 4-DOF problem can be calculated more rapidly and the calculated yaw angle will be more accurate.

IMU+3P utilizes more point correspondences than IMU+2P,therefore, has higher translation accuracy. When the number of point correspondences is quite small,the error of each point correspondence greatly affects the results. So the more point correspondences may disturb the accuracy of rotation estimation especially when there is original,highly accurate IMU data. Hence,the rotation accuracy of IMU+3P is slightly lower than IMU+2P.

B. Real Experiments of Visual Navigation in Indoor EnvironmentsWe also tested the IMU+3P in real experiments. In real experiments,the position error of IMU+3P was less than 2 cm. And the rotation error of IMU+3P was below 0.5 degree. The accuracy of IMU+3P was good and met the requirements of our visual navigation system. The total time cost of the process by our visual navigation system was about 120 ms for every new frame. It met the real-time requirements for UAV applications.

In real indoor environments,the monocular visual navigation system was performed. In indoor experiments,we placed an industrial camera on the ground to get the ground-truth translation of the quadrotor. This motion capture system can achieve the accuracy of millimetre level. The ground-truth rotation was obtained by using IMU data and electronic compass. The experiment field was about 3 m$\times$3 m. We placed some pictures on the ground to enrich the features. The height of the quadrotor was about 2 ~2.5 m indoors.

1) The results of real indoor experiments: The results of real visual navigation experiment are shown in Fig. 9. The ground-truth pose obtained by the external industrial camera is figured in chain line. Each triangle denotes the localization results of the quadrotor at a certain time of the navigation process. So all of the triangles denote the entire visual navigation trajectory of the quadrotor. From the navigation trajectory,we find that our visual navigation system can get robust and accurate navigation results. In order to show the details clearly,a small part of the results is particularly shown in Fig. 9 (b).

|

Download:

|

| Fig. 9. The real visual navigation experiments in indoor environments (The entire navigation trajectory is shown in (a), and a part of the entire trajectory is figured in (b) for showing the details clearly.) | |

2) Pose error in real indoor experiments: Fig. 9 (a) shows the ground-truth trajectory gained by both external industrial camera and the localization results calculated by our visual navigation system. The error of each localization result was calculated with the corresponding ground-truth result. At the initial phase of the experiments,the pose error was low. When the quadrotor flew for a long time,the pose error would become larger because of error accumulation. We should see that the error was in a quite limited range during the entire navigation process. In this experiment,the quadrotor did not fly along a loop. Because in that case,the loop closure detection would reduce the error accumulation effectively. Here we intended to show the error accumulation situation and analyze the accuracy of our visual navigation system. The length of the trajectory in indoor experiment was about 5 m. At the end,the translation error was about 11.6 cm. The total translation error was about 2.32 %. The total rotation error was below 1.0 degree. We have proved that the visual navigation system has quite good accuracy in real experiments indoors.

C. Real Experiments of Visual Navigation in Outdoor EnvironmentsWe performed the monocular visual navigation system in real outdoor environments. In outdoor environments,we could not get effective ground-truth when the quadrotor flew in large-scale environments. Here we placed several artificial landmarks at the waypoints. The poses of the landmarks were known in advance.

1) Real experiments in outdoor environments: The results of real visual navigation outdoors are shown in Fig. 10. We figure the trajectory of the quadrotor in triangles. The experiment field was about 3 m$\times$10 m in outdoor environments. The height of the quadrotor was about 2~3 m. From Fig. 10,we see that the monocular visual navigation gets good navigation results in outdoor environments.

|

Download:

|

| Fig. 10. The real visual navigation experiments in outdoor environments (Some real flight scenes are shown on the left, and the real navigation trajectory is figured on the right.) | |

2) Pose error in real experiment outdoors: In this outdoor experiment,the length of the navigation trajectory was about 15 m in Fig. 10. We placed several landmarks at some waypoints. We compared the results of our visual navigation system with the results from known landmarks. In this experiment the quadrotor did not fly along a loop. Because in that case,the loop closure detection would reduce the error accumulation effectively. Here we only wanted to show the error accumulation situation and analyze the accuracy of our visual navigation system. Pose errors obtained in the middle and at the end of the trajectory were shown here. In the middle,the length of the trajectory was about 8 m. The translation error was about 29.8 cm,and the total rotation error was below 1.2 degree. The total translation error was about 3.73% in the middle of the navigation process. At the end of the entire experiment,the translation error was about 68.5 cm. The total rotation error was below 1.4 degree. The total translation error was about 4.57%. These results demonstrate that our visual navigation system can restrain error accumulation effectively and has excellent results of visual navigation.

D. Discussion on Real ExperimentsOur monocular visual navigation system could get quite good navigation results for both indoor and outdoor experiment. The total translation error of indoor experiments was about 2.32% which was lower than that of outdoor experiment. The total rotation error of indoor experiment was also lower. These cases may be resulted in by several reasons. The ground in indoor environments was usually quite smooth,while the ground outdoors might not be smooth. The sonar data was used to initialize the entire visual navigation system and got the accurate initial pose. So the initial pose of indoor experiment was usually more accurate than that of outdoor experiment. Besides,the outdoor environments might lack good and enough features on the ground. This would also lower the accuracy of the real outdoor experiment. In the end,the trajectory of outdoor experiment was longer than that of indoor experiment. The outdoor visual navigation experiment was usually performed in a large region. This would lower the accuracy compared with indoor environments. In fact,our visual navigation experiments have accurate results for both indoor and outdoor environments,which can meet the requirements for real UAV applications.

Ⅴ. CONCLUSIONSIn this paper, we present a multi-sensor fusion based monocular visual navigation system for the quadrotor with limited payload, power and computational resources. This navigation system equipped with an IMU, a sonar and a monocular down-looking camera is able to work well in GPS-denied and markerless environments. Different from most of the keyframe-based methods, our visual navigation system is based on both keyframes and keypoints. We perform GPU-based SURF for feature detection and feature matching. Utilizing the omni-directional flight characteristics of the quadrotor, we propose the refined preliminary motion estimation in pixels combining images and IMU data. A multi-level judgment rule is then presented for updating the keyframes or the keypoints selectively. This is beneficial to hovering conditions and can reduce the error accumulation effectively.

For accurate motion estimation, we present a novel IMU+3P pose estimation algorithm which uses three point correspondences and IMU data. This algorithm transforms the 6-DOF pose estimation problem into the 4-DOF problem and can obtain more accurate results with less computation time. The common monocular visual navigation systems lack the metric scale, while we have solved the metric scale estimation by using the sonar sensor in the initialization of the navigation system.

We have tested the monocular visual navigation system in real indoor and outdoor environments. We have a robust and accurate flying trajectory estimation of the quadrotor. The results demonstrate that the monocular visual navigation system for the quadrotor can perform in real-time and has robust and accurate navigation results.

| [1] | Scherer S, Singh S, Chamberlain L, Elgersma M. Flying fast and low among obstacles:methodology and experiments. International Journal of Robotics Research, 2008, 27(5):549-574 |

| [2] | He R J, Bachrach A, Achtelik M, Geramifard A, Gurdan D, Perentice S, Stumpf J, Roy N. On the design and use of a micro air vehicle to track and avoid adversaries. International Journal of Robotics Research, 2010, 29(5):529-546 |

| [3] | Ahrens S, Levine D, Andrews G, How P J. Vision-based guidance and control of a hovering vehicle in unknown, GPS-denied environments. In:Proceedings of the 2009 IEEE International Conference on Robotics and Automation. Kobe, Japan:IEEE, 2009. 2643-2648 |

| [4] | Abeywardena D, Wang Z, Kodagoda S, Dissanayake G. Visual-Inertial fusion for quadrotor micro air vehicles with improved scale observability. In:Proceedings of the 2013 IEEE International Conference on Robotics and Automation. Karlsruhe, Germany:IEEE, 2013.3148-3153 |

| [5] | Meier L, Tanskanen P, Heng L, Lee G H, Fraundorfer F, Pollefeys M. PIXHAWK:a micro aerial vehicle design for autonomous flight using onboard computer vision. Auton Robot, 2012, 33(1-2):21-39 |

| [6] | Grzonka S, Grisetti G, Burgard W. Towards a navigation system for autonomous indoor flying. In:Proceedings of the 2009 IEEE International Conference on Robotics and Automation. Kobe, Japan:IEEE, 2009. 2878-2883 |

| [7] | Wang F, Cui J Q, Chen B M, Lee T H. A comprehensive UAV indoor navigation system based on vision optical flow and laser FastSLAM. Acta Automatica Sinica, 2013, 39(11):1889-1900 |

| [8] | Bachrach A, He R J, Roy N. Autonomous flight in unstructured and unknown indoor environments. In:Proceedings of the 2009 European Micro Aerial Vehicle Conference and Flight Competition. Delft, Netherland:Massachusetts Institute of Technology, 2009. 119-126 |

| [9] | Bachrach A, Prentice S, He R J, Roy N. RANGE-robust autonomous navigation in GPS-denied environments. Journal of Field Robotics, 2011, 28(5):644-666 |

| [10] | Shen S J, Michael N, Kumar V. Autonomous indoor 3D exploration with a micro-aerial vehicle. In:Proceedings of the 2012 IEEE International Conference on Robotics and Automation. Saint Paul, USA:IEEE, 2012. 9-15 |

| [11] | Fraundorfer F, Heng L, Honegger D, Lee H G, Meier L, Tanskanen P, Pollefeys M. Vision-based autonomous mapping and exploration using a quadrotor MAV. In:Proceedings of the 2012 International Conference on Intelligent Robots and Systems. Algarve, Portugal:IEEE, 2012. 4557-4564 |

| [12] | Shen S J, Mulgaonkar Y, Michael N, Kumar V. Vision-based state estimation for autonomous rotorcraft MAVs in complex environments. In:Proceedings of the 2013 IEEE International Conference on Robotics and Automation. Karlsruhe, Germany:IEEE, 2013.1758-1764 |

| [13] | Davison A J, Reid I D, Molton N D, Stasse O. MonoSLAM:Realtime single camera SLAM. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2007, 29(6):1052-1067 |

| [14] | Williams B, Hudson N, Tweddle B, Brockers R, Matthies L. Feature and pose constrained visual aided inertial navigation for computationally constrained aerial vehicles. In:Proceedings of the 2011 IEEE International Conference on Robotics and Automation. Shanghai, China:IEEE, 2011. 431-438 |

| [15] | Mouragnon E, Lhuillier M, Dhome M, Dekeyser F, Sayd P. Real time localization and 3D reconstruction. In:Proceedings of the 2006 IEEE Conference on Computer Vision and Pattern Recognition. New York, USA:IEEE, 2006. 363-370 |

| [16] | Klein G, Murray D. Parallel tracking and mapping for small AR workspaces. In:Proceedings of the 2007 IEEE and ACM International Symposium on Mixed and Augmented Reality. Nara, Japan:IEEE, 2007. 225-234 |

| [17] | Klein G, Murray D. Improving the agility of keyframe-based SLAM. In:Proceedings of the 2008 European Conference on Computer Vision. Marseille, France:Springer, 2008. 802-815 |

| [18] | Strasdat H, Montiel J M M, Davison A J. Visual SLAM:why filter? Image and Vision Computing, 2012, 30(2):65-77 |

| [19] | Zou D P, Tan P. CoSLAM:collaborative visual SLAM in dynamic environments. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2013, 35(2):354-366 |

| [20] | Blosch M, Weiss S, Scaramuzza D, Siegwart R. Vision based MAV navigation in unknown and unstructured environments. In:Proceedings of the 2010 IEEE International Conference on Robotics and Automation. Alaska, USA:IEEE, 2010. 21-28 |

| [21] | Achtelik M, Achtelik M, Weiss S, Siegwart R. Onboard IMU and monocular vision based control for MAVs in unknown in- and outdoor environments. In:Proceedings of the 2011 IEEE International Conference on Robotics and Automation. Shanghai, China:IEEE, 2011. 3056-3063 |

| [22] | Engel J, Sturm J, Cremers D. Camera-based navigation of a lowcost quadrocopter. In:Proceedings of the 2012 IEEE International Conference on Intelligent Robots and Systems. Algarve, Portugal:IEEE, 2012. 2815-2821 |

| [23] | Weiss S, Achtelik M W, Lynen S, Chli M, Siegwart R. Real-time onboard visual-inertial state estimation and self-calibration of MAVs in unknown environments. In:Proceedings of the 2012 IEEE International Conference on Robotics and Automation. Saint Paul, USA:IEEE, 2012. 957-964 |

| [24] | Nister D. An efficient solution to the five-point relative pose problem. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2004, 26(6):756-770 |

| [25] | Li H D, Hartley R. Five-point motion estimation made easy. In:Proceedings of the 2006 IEEE International Conference on Pattern Recognition. Hong Kong, China:IEEE, 2006. 630-633 |

| [26] | Fraundorfer F, Tanskanen P, Pollefeys M. A minimal case solution to the calibrated relative pose problem for the case of two known orientation angles. In:Proceedings of the 2010 European Conference on Computer Vision. Crete, Greece:Springer, 2010. 269-282 |

| [27] | Sun Feng-Mei, Wang Bo. A note on the roots distribution and stability of the PnP Problem. Acta Automatica Sinica, 2010, 36(9):1213-1219(in Chinese) |

| [28] | Quan L, Lan Z D. Linear N-point camera pose determination. IEEE Transactions on Pattern Analysis and Machine Intelligence, 1999, 21(8):774-780 |

| [29] | Ansar A, Daniilidis K. Linear pose estimation from points or lines. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2003, 25(5):578-589 |

| [30] | Gao X S, Hou X R, Tang J L, Cheng H F. Complete solution classification for the perspective-three-point problem. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2003, 25(8):930-943 |

| [31] | Lepetit V, Moreno-Noguer F, Pascal F. EPnP:accurate non-iterative O(n) solution to the PnP problem. International Journal of Computer Vision, 2008, 81(2):151-166 |

| [32] | Lu C P, Hager G D, Mjolsness E. Fast and globally convergent pose estimation from video images. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2000, 22(6):610-622 |

| [33] | Hu Zhan-Yi, Lei Cheng, Wu Fu-Chao. A short note on P4P problem. Acta Automatica Sinica, 2001, 27(6):770-776(in Chinese) |

| [34] | Schweighofer G, Pinz A. Robust pose estimation from a planar target. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2006, 28(12):2024-2030 |

| [35] | Kukelova Z, Bujnak M, Pajdla T. Closed-form solutions to minimal absolute pose problems with known vertical direction. In:Proceedings of the 2010 Asian Conference on Computer Vision. Berlin Heidelberg:Springer, 2010.216-229 |

| [36] | Rosten E, Drummond T. Machine learning for high-speed corner detection. In:Proceedings of the 2006 European Conference on Computer Vision. Berlin Heidelberg:Springer, 2006. 430-443 |

| [37] | Mair E, Hager G D, Burschka D, Suppa M, Hirzinger G. Adaptive and generic corner detection based on the accelerated segment Test. In:Proceedings of the 2010 European Conference on Computer Vision. Crete, Greece:Springer, 2010. 183-196 |

| [38] | Calonder M, Lepetit V, Strecha C, Fua P. BRIEF:Binary robust independent elementary features. In:Proceedings of the 2010 European Conference on Computer Vision. Berlin Heidelberg:Springer, 2010. 778-792 |

| [39] | Lowe D G. Distinctive image features from scale-invariant keypoints. International Journal of Computer Vision, 2004, 60(2):91-110 |

| [40] | Bay H, Tuytelaars T, van Gool L V. SURF:speeded up robust features. Computer Vision and Image Understanding, 2008, 110(3):346-359 |

2015, Vol.2

2015, Vol.2