Distributed Self-triggered Control for Consensus of Multi-agent Systems

I. INTRODUCTION

Recently, there has been increasing interest in event-triggered feedback control systems [1, 2, 3, 4, 5, 6, 7, 8, 9]. In event-triggered control, data transmission or control actuation is executed after the occurrence of an event generated by an event-triggering mechanism. This mechanism usually depends on a well-defined event-triggering condition in which the measurement error plays an essential role: when the magnitude of the measurement error reaches a prescribed threshold, an event is triggered. Event-triggered techniques can reduce resource usage and provide a higher degree of robustness. However, in many cases they require dedicated hardware to monitor the plant continuously, which is not available on many general-purpose devices. This motivates the development of self-triggered control for digital platforms [10, 11, 12, 13]. In self-triggered control, the next update time of the controller is computed at the previous update instant, without having to monitor the measurement error between two consecutive update instants. Owing to these advantages, self-triggered control of multi-agent systems (MASs) has received increasing attention. For example, in [10, 12], novel self-triggered control strategies were proposed under which MASs achieve average consensus. It can be observed from these works that the self-triggered controllers for MASs available so far are based on static state feedback under the restrictive assumption that all the agents' states can be measured, and that the individual agent dynamics are assumed to be single integrators. Compared with conventional event-triggered control of MASs [14, 15], the approach in this paper requires neither continuous measurement nor continuous communication, so actuation power and communication resources may be saved. To the best of our knowledge, there are few works on self-triggered control of MASs with general linear dynamics, based either on state feedback or on output feedback. This motivates the present study.

In this paper, we study the consensus problem of MASs with general linear dynamics via distributed self-triggered control. Novel distributed self-triggered controllers are proposed that achieve asymptotic consensus of the MASs. Both state-feedback and output-feedback distributed self-triggered consensus problems are investigated; the proposed controllers reduce resource usage while guaranteeing satisfactory consensus performance. The rest of the paper is organized as follows. In Section II, the problem under study is formulated. Sections III and IV present the main results of this paper, namely distributed self-triggered control laws based on state feedback and output feedback, respectively. An example is presented in Section V to illustrate the effectiveness of the proposed control methods. Finally, conclusions are given in Section VI.

${\bf Notation.}$ For a vector $x={\rm col}\left(x_{1},\cdots,x_{n}\right)\in{\bf R}^{n}$ and a matrix $A=[a_{ij}]_{n\times n}\in{\bf R}^{n\times n}$, $\Vert x\Vert$ and $\Vert A\Vert$ denote the Euclidean norm of $x$ and the induced 2-norm of $A$, respectively. For a real symmetric matrix $P$, $P>0$ ($P<0$) means that $P$ is positive (negative) definite. $M^{\rm T}$ denotes the transpose of a matrix $M$. The identity matrix of order $m$ is denoted by $I_{m}$. Matrices are assumed to have compatible dimensions if not explicitly stated. $A\otimes B$ denotes the Kronecker product of matrices $A$ and $B$. $\textbf{1}$ denotes the column vector with all entries equal to one. ${\bf N}$ denotes the set of positive integers.

II. PROBLEM FORMULATION

The communication topology among agents is represented by an undirected graph $\mathcal {G}=(\mathcal {V},\mathcal {E},\mathcal {A})$, where $\mathcal {V}=\{\upsilon_{1},\cdots,\upsilon_{N}\}$ is the set of nodes, with node indices belonging to the finite index set $\mathcal {I}=\{1,\cdots,N\}$, and $\mathcal {E}\subseteq \mathcal {V}\times \mathcal {V}$ is the set of unordered pairs of nodes, called edges. Two nodes $\upsilon_{i}$ and $\upsilon_{j}$ are adjacent, or neighboring, if $(\upsilon_{i},\upsilon_{j})$ is an edge of $\mathcal {G}$. A path on $\mathcal {G}$ from node $\upsilon_{i_{1}}$ to node $\upsilon_{i_{l}}$ is a sequence of edges of the form $(\upsilon_{i_{k}},\upsilon_{i_{k+1}})$, $k=1,\cdots,l-1$. A graph is called connected if there exists a path between every pair of distinct nodes. The adjacency matrix $\mathcal {A}=[a_{ij}]\in{\bf R}^{N\times N}$ has nonnegative elements $a_{ij}$ and zero diagonal elements, with $a_{ij}>0\Leftrightarrow(\upsilon_{i},\upsilon_{j})\in\mathcal {E}$; if $(\upsilon_{i},\upsilon_{j})\in\mathcal {E}$, node $\upsilon_{j}$ is called a neighbor of node $\upsilon_{i}$. Since $\mathcal {G}$ is undirected, $a_{ij}=a_{ji}$. The neighbor index set of agent $\upsilon_{i}$ is denoted by $\mathcal {N}_{i}=\{j\in\mathcal {I}\,|\,(\upsilon_{j},\upsilon_{i})\in\mathcal {E}\}$. The degree matrix of $\mathcal {G}$ is $\Delta={\rm diag}\{\Delta_1,\Delta_2,\cdots,\Delta_N\}$, where $\Delta_i=\sum\nolimits_{j\in\mathcal {N}_{i}}a_{ij}$, and the symmetric matrix $\mathcal {L}=\Delta-\mathcal {A}$ is the Laplacian matrix of $\mathcal {G}$. If $\mathcal {G}$ is connected, $\mathcal {L}$ has a simple zero eigenvalue with eigenvector $\textbf{1}$, and its eigenvalues are denoted by $0=\lambda_{1}<\lambda_{2}\leq\cdots\leq\lambda_{N}$.
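The graph quantities above can be computed directly. The following Python sketch assumes NumPy; the helper names `laplacian`, `laplacian_spectrum` and `is_connected` are hypothetical, not from this paper. It builds $\mathcal{L}=\Delta-\mathcal{A}$ from a symmetric adjacency matrix and tests connectivity through $\lambda_2>0$, using the adjacency matrix implied by the Laplacian of Section V.

```python
import numpy as np


def laplacian(adj):
    """Return L = Delta - A for a symmetric, nonnegative adjacency matrix A."""
    adj = np.asarray(adj, dtype=float)
    if not np.allclose(adj, adj.T):
        raise ValueError("undirected graph expected: adjacency must be symmetric")
    if np.any(np.diag(adj) != 0) or np.any(adj < 0):
        raise ValueError("adjacency must be nonnegative with a zero diagonal")
    degree = np.diag(adj.sum(axis=1))  # Delta_i = sum_j a_ij
    return degree - adj


def laplacian_spectrum(adj):
    """Eigenvalues in ascending order (L is symmetric, so eigvalsh applies)."""
    return np.linalg.eigvalsh(laplacian(adj))


def is_connected(adj, tol=1e-9):
    """G is connected iff zero is a simple eigenvalue of L, i.e. lambda_2 > 0."""
    return bool(laplacian_spectrum(adj)[1] > tol)


# Unit-weight adjacency matrix of the four-agent graph of Section V.
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 1],
                [0, 1, 0, 1],
                [0, 1, 1, 0]])
print(laplacian(adj))
print(laplacian_spectrum(adj))
print(is_connected(adj))
```

For this graph the Laplacian spectrum is $\{0,1,3,4\}$, so $\lambda_2=1$ and any $\mu\geq 1$ satisfies the condition $\mu\lambda_2\geq 1$ used in the theorems below.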

In this paper, the consensus problem is investigated for a group of $N$ identical agents with general linear dynamics described by

\begin{align}\label{1}
\left\{
\begin{array}{lll}
\dot{x}_{i}(t)=Ax_{i}(t)+Bu_{i}(t),\\[1mm]
y_{i}(t)=Cx_i(t),~~ i=1,2,\cdots,N,
\end{array}
\right.
\end{align}

where $x_{i}(t)\in{\bf R}^{n}$ is the state, $u_{i}(t)\in {\bf R}^{p}$ is the control input, $y_{i}(t)\in {\bf R}^{q}$ is the measured output, and $A$, $B$ and $C$ are constant matrices with compatible dimensions. Assume that $(A,B)$ is controllable and $(A,C)$ is observable. A protocol $u_i(t)$ is said to solve the consensus problem asymptotically if the states of the agents satisfy

\begin{equation}\label{2}
\begin{array}{cll}
\lim\limits_{t\rightarrow\infty}\|x_{i}(t)-x_{j}(t)\|=0,~~\forall i,j\in\mathcal {I},~i\neq j.
\end{array}
\end{equation}

In this paper, both distributed self-triggered state-feedback and output-feedback control laws are proposed.

III. DISTRIBUTED SELF-TRIGGERED CONTROL BASED ON STATE FEEDBACK

In self-triggered control, each control task determines its next release time based on the value of the last sampled measurement. If all the states of the agents are measurable, the distributed self-triggered state-feedback control law is designed as

\begin{equation}\label{3}
\begin{array}{cll}
u_{i}(t)=-\mu F\omega_{i}(t_{k}^{i}),~~t\in[t_{k}^{i},t_{k+1}^{i}),
\end{array}
\end{equation}

where $\omega_{i}(t_{k}^{i})=\sum\nolimits_{j\in\mathcal {N}_{i}}a_{ij}(x_{i}(t_{k}^{i})-x_{j}(t_{k}^{i}))$, $\mu$ is a positive scalar, and $F\in{\bf R}^{p\times n}$ is the feedback gain matrix.
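The disagreement $\omega_{i}(t_{k}^{i})$ in (3) uses only agent $i$'s own state and its neighbors' states sampled at $t_{k}^{i}$. The Python sketch below assumes NumPy, and its function names are hypothetical. It computes $\omega_i$ locally, checks it against the stacked form $(\mathcal{L}\otimes I_n)x$ used in the compact closed-loop equations, and evaluates the control input of (3) for a given gain $F$.

```python
import numpy as np


def local_disagreement(adj, x, i):
    """omega_i = sum_{j in N_i} a_ij (x_i - x_j), using agent i's neighbours only.

    adj: (N, N) adjacency matrix; x: (N, n) array whose row j is x_j.
    """
    neighbours = np.flatnonzero(adj[i])
    return sum((adj[i, j] * (x[i] - x[j]) for j in neighbours),
               np.zeros(x.shape[1]))


def stacked_disagreement(adj, x):
    """Compact form omega = (L kron I_n) x, with x stacked as [x_1; ...; x_N]."""
    n_agents, n = x.shape
    lap = np.diag(adj.sum(axis=1)) - adj
    return (np.kron(lap, np.eye(n)) @ x.reshape(-1)).reshape(n_agents, n)


def control_input(mu, F, adj, x_sampled, i):
    """u_i = -mu F omega_i(t_k^i); x_sampled holds the states read at t_k^i."""
    return -mu * (F @ local_disagreement(adj, x_sampled, i))


rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 1],
                [0, 1, 0, 1],
                [0, 1, 1, 0]], dtype=float)
x = rng.standard_normal((4, 3))          # N = 4 agents, n = 3 states each
omega_local = np.array([local_disagreement(adj, x, i) for i in range(4)])
print(np.allclose(omega_local, stacked_disagreement(adj, x)))  # True
print(np.allclose(omega_local.sum(axis=0), 0.0))               # True
```

The second check reflects $\sum_i\omega_i=0$, which holds because the graph is undirected and is used later in the proof of Theorem 1.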

${\bf Remark 1.}$ The controller used in most existing works on event-triggered control of MASs [2, 8, 10] is also updated at the event times of the neighbors, i.e., $u_i(t)=-\sum\nolimits_{j\in\mathcal {N}_{i}}a_{ij}(x_i(t_k^{i})-x_j(t_{k'}^{j}))$, where $k'=k'(t)=\arg\max\nolimits_{l\in{\bf N}}\{l\,|\,t\geq t_{l}^{j}\}$. Thus, for each $t\in[t_{k}^{i},t_{k+1}^{i})$, $t_{k'(t)}^{j}$ is the last event time of agent $j$. In contrast, controller (3) is updated only at the event times of agent $i$ itself.
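The index $k'(t)$ in Remark 1 is the position of the most recent event of agent $j$ not later than $t$. A minimal Python sketch using binary search from the standard library is given below; the helper names are hypothetical, indices are 0-based to match $t_0^{j},t_1^{j},\cdots$, and the event times are assumed to be sorted.

```python
from bisect import bisect_right


def last_event_index(event_times, t):
    """Return k'(t) = max{l : t >= t_l^j}, or None if t precedes t_0^j.

    event_times must be sorted in increasing order; indices are 0-based.
    """
    k = bisect_right(event_times, t) - 1
    return k if k >= 0 else None


def held_sample(event_times, samples, t):
    """Value broadcast by agent j at its last event time t_{k'(t)}^j."""
    k = last_event_index(event_times, t)
    if k is None:
        raise ValueError("no event of agent j has occurred yet at time t")
    return samples[k]


times_j = [0.0, 0.4, 1.1, 1.5]          # t_0^j, t_1^j, t_2^j, t_3^j
print(last_event_index(times_j, 1.1))    # 2: the event at t = 1.1 counts
print(last_event_index(times_j, 0.39))   # 0
print(held_sample(times_j, ["s0", "s1", "s2", "s3"], 2.0))  # s3
```

Under the neighbor-triggered controllers of [2, 8, 10], agent $i$ needs such a lookup for every neighbor at every $t$, whereas controller (3) only needs the neighbors' states at agent $i$'s own instants.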

The sequence of execution instants of agent $i$ is denoted by $t_0^{i},t_1^{i},\cdots$. The state measurement error is defined as

\begin{align}\label{4}
e_{i}(t)=\omega_{i}(t_{k}^{i})-\omega_{i}(t),~~t\in[t_{k}^{i},t_{k+1}^{i}),
\end{align}

where $\omega_{i}(t)=\sum\nolimits_{j\in\mathcal {N}_{i}}a_{ij}(x_{i}(t)-x_{j}(t))$. The closed-loop system of (1) and (3) is

\begin{align*}
\dot{x}_{i}(t)=&\ Ax_{i}(t)-\mu BF\omega_{i}(t_{k}^{i})=\\
&\ Ax_{i}(t)-\mu BF(e_{i}(t)+\omega_{i}(t)),
\end{align*}

which can be written in the compact form

\begin{equation}\label{5}
\begin{array}{cll}
\dot{x}(t)=\bar{A}x(t)-(\mu I_{N}\otimes BF)(e(t)+\omega(t)),
\end{array}
\end{equation}

where $\bar{A}=I_{N}\otimes A$, $x(t)=[x_{1}^{\rm T}(t),\cdots,x_{N}^{\rm T}(t)]^{\rm T}$, $e(t)=[e_{1}^{\rm T}(t),\cdots,e_{N}^{\rm T}(t)]^{\rm T}$, and $\omega(t)=[\omega_{1}^{\rm T}(t),\cdots,\omega_{N}^{\rm T}(t)]^{\rm T}=(\mathcal {L}\otimes I_{n})x(t)$. Multiplying both sides of (5) by $\mathcal {L}\otimes I_{n}$ and using $(\mathcal {L}\otimes I_{n})\bar{A}=\bar{A}(\mathcal {L}\otimes I_{n})$, one has

\begin{equation}\label{6}
\begin{array}{cll}
\dot{\omega}(t)=(\bar{A}-\mu\mathcal {L}\otimes BF)\omega(t)-(\mu\mathcal {L}\otimes BF)e(t),
\end{array}
\end{equation}

thus

\begin{equation}\label{7}
\begin{array}{cll}
\dot{\omega}_{i}(t)=A\omega_{i}(t)-\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\omega_{i}(t_{k}^{i})-\omega_{j}(t_{k'}^{j})).
\end{array}
\end{equation}

Then we have the following result.

${\bf Theorem 1.}$ Assume that the communication graph $\mathcal {G}$ is connected. Given $\delta>0$, consider the controller gain $F=B^{\rm T}P$, where $P>0$ is a solution of the Riccati equation

\begin{equation}\label{8}
\begin{array}{cll}
A^{\rm T}P+PA-2PBB^{\rm T}P+\delta I=0,
\end{array}
\end{equation}

and let the triggering instants be chosen such that

\begin{align}\label{9}
&t_{k+1}^{i}\leq t_{k}^{i}+\frac{1}{\|A\|}\ln\Bigg(1+\dfrac{d_{i}}{1+d_{i}}\times\notag\\
&\qquad\dfrac{\|A\|\|\omega_{i}(t_{k}^{i})\|}{\|A\omega_{i}(t_{k}^{i})\|+\|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\omega_{i}(t_{k}^{i})-\omega_{j}(t_{k'}^{j}))\|}\Bigg),
\end{align}

where $\mu$ is sufficiently large such that $\mu\lambda_{2}\geq 1$, $d_{i}=\Big(\sigma_{i}\frac{\delta-2\mu\theta\Delta_{i}\|PBB^{\rm T}P\|}{\frac{2\mu\Delta_{i}}{\theta}\|PBB^{\rm T}P\|}\Big)^{1/2}$ with $0<\sigma_{i}<1$, and $0<\theta<\frac{\delta}{2\mu\max\nolimits_{i}\{\Delta_{i}\}\|PBB^{\rm T}P\|}$. Then the $N$ agents in (1) reach consensus under the control law (3).
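The design quantities of Theorem 1 can be computed offline. The Python sketch below assumes NumPy and solves (8) through the stable invariant subspace of the associated Hamiltonian matrix, which is one standard method; SciPy's `scipy.linalg.solve_continuous_are` with $Q=\delta I$ and $R=\frac{1}{2}I$ solves the same equation more robustly. The sketch then takes the smallest admissible $\mu=1/\lambda_2$ and half of the admissible upper bound for $\theta$, and evaluates $d_i$. The function names and these default choices are illustrative assumptions, not part of the paper.

```python
import numpy as np


def solve_riccati(A, B, delta):
    """Stabilising solution P of A^T P + P A - 2 P B B^T P + delta I = 0.

    Uses the stable invariant subspace [X1; X2] of the Hamiltonian
    H = [[A, -2 B B^T], [-delta I, -A^T]] and returns P = X2 X1^{-1}.
    """
    n = A.shape[0]
    H = np.block([[A, -2.0 * B @ B.T],
                  [-delta * np.eye(n), -A.T]])
    eigvals, eigvecs = np.linalg.eig(H)
    stable = eigvecs[:, eigvals.real < 0]
    if stable.shape[1] != n:
        raise np.linalg.LinAlgError("no stabilising solution; check (A, B)")
    X1, X2 = stable[:n, :], stable[n:, :]
    P = np.real(np.linalg.solve(X1.T, X2.T).T)  # P X1 = X2
    return 0.5 * (P + P.T)                       # remove round-off asymmetry


def theorem1_parameters(A, B, adj, delta, sigma, mu=None, theta=None):
    """Return P, F = B^T P, mu, theta and d_i as required by Theorem 1."""
    adj = np.asarray(adj, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    P = solve_riccati(A, B, delta)
    F = B.T @ P
    deg = adj.sum(axis=1)                          # Delta_i
    lam2 = np.linalg.eigvalsh(np.diag(deg) - adj)[1]
    mu = 1.0 / lam2 if mu is None else mu          # smallest mu with mu*lambda_2 >= 1
    if mu * lam2 < 1.0 - 1e-12:
        raise ValueError("mu * lambda_2 >= 1 is required")
    M = np.linalg.norm(P @ B @ B.T @ P, 2)        # ||P B B^T P|| (spectral norm)
    theta_max = delta / (2.0 * mu * deg.max() * M)
    theta = 0.5 * theta_max if theta is None else theta
    if not 0.0 < theta < theta_max:
        raise ValueError("theta must lie in (0, theta_max)")
    d = np.sqrt(sigma * (delta - 2.0 * mu * theta * deg * M)
                / (2.0 * mu * deg * M / theta))
    return {"P": P, "F": F, "mu": mu, "theta": theta,
            "theta_max": theta_max, "d": d}


# Illustrative double-integrator agents on the four-agent graph of Section V.
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
adj = np.array([[0, 1, 0, 0], [1, 0, 1, 1], [0, 1, 0, 1], [0, 1, 1, 0]])
params = theorem1_parameters(A, B, adj, delta=1.0, sigma=[0.1, 0.2, 0.3, 0.4])
P = params["P"]
residual = A.T @ P + P @ A - 2 * P @ B @ B.T @ P + np.eye(2)  # left side of (8)
print(np.abs(residual).max(), params["d"])
```

Returning the stabilising solution matters here: it is the one for which $A-2BB^{\rm T}P$ is Hurwitz, which is what inequality (13) relies on.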

${\bf Proof.}$ Consider a Lyapunov function candidate for the closed-loop system as

\begin{equation}\label{10}
\begin{array}{cll}
V(t)=\omega^{\rm T}(t)\bar{P}\omega(t),
\end{array}
\end{equation}

where $\bar{P}=I_{N}\otimes P$ and $P>0$ is the solution of (8). Calculating the time derivative of $V(t)$ along the solutions of (6), one has

\begin{align}
\dot{V}(t)=&\ \omega^{\rm T}(t)\big(I_{N}\otimes(PA+A^{\rm T}P)-2\mu\mathcal {L}\otimes PBB^{\rm T}P \big)\omega(t)\;+\notag\\
&\ 2\mu\sum\limits_{i=1}^{N}\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}\omega_{i}^{\rm T}(t)PBB^{\rm T}P(e_j(t)-e_{i}(t)).
\end{align}

Since $\mathcal {G}$ is connected, zero is a simple eigenvalue of $\mathcal {L}$ and all the other eigenvalues are positive. Let $U\in{\bf R}^{N\times N}$ be an orthogonal matrix such that $U^{\rm T}\mathcal {L}U=\Lambda={\rm diag}\{0,\lambda_{2},\cdots,\lambda_{N}\}$. Since the right and left eigenvectors of $\mathcal {L}$ corresponding to the zero eigenvalue are $\textbf{1}$ and $\textbf{1}^{\rm T}$, respectively, one can choose $U=\big[\frac{\textbf{1}}{\sqrt{N}}~~X_1\big]$ and $U^{\rm T}=\left[\begin{array}{c}\frac{\textbf{1}^{\rm T}}{\sqrt{N}}\\ X_2\end{array}\right]$, with $X_{1}\in{\bf R}^{N\times(N-1)}$ and $X_{2}\in{\bf R}^{(N-1)\times N}$. Let $\xi(t)=[\xi_{1}^{\rm T}(t),\cdots,\xi_{N}^{\rm T}(t)]^{\rm T}=(U^{\rm T}\otimes I_n)\omega(t)$. Since $\textbf{1}^{\rm T}\mathcal {L}=0$, one has $\omega_{1}(t)+\cdots+\omega_{N}(t)=(\textbf{1}^{\rm T}\mathcal {L}\otimes I_n)x(t)=0$. Hence $\xi_1(t)=0$, and thus

\begin{align}\label{12}
\omega^{\rm T}(t)&\big(I_{N}\otimes(PA+A^{\rm T}P)-2\mu\mathcal {L}\otimes PBB^{\rm T}P \big)\omega(t)=\notag\\
&\ \xi^{\rm T}(t)\big(I_{N}\otimes(PA+A^{\rm T}P)-2\mu\Lambda\otimes PBB^{\rm T}P \big)\xi(t)=\notag\\
&\sum\limits_{i=2}^{N}\xi_{i}^{\rm T}(t)\big(PA+A^{\rm T}P-2\mu\lambda_{i} PBB^{\rm T}P \big)\xi_{i}(t).
\end{align}

By choosing $\mu$ sufficiently large such that $\mu\lambda_{2}\geq 1$, and noting that $\lambda_{i}\geq\lambda_{2}$ and $PBB^{\rm T}P\geq 0$, one has, for $i=2,\cdots,N$,

\begin{align}
&PA+A^{\rm T}P-2\mu\lambda_{i} PBB^{\rm T}P\leq\notag\\
&\qquad PA+A^{\rm T}P-2 PBB^{\rm T}P =-\delta I,
\end{align}

where the last equality follows from (8). It then follows from (12), (13) and the orthogonality of $U$ that

\begin{align}
&\omega^{\rm T}(t)\big(I_{N}\otimes(PA+A^{\rm T}P)-2\mu\mathcal {L}\otimes PBB^{\rm T}P \big)\omega(t)\leq\notag\\
&\qquad\ -\delta \|\xi(t)\|^{2}=-\delta \|\omega(t)\|^{2}.
\end{align}
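The change of coordinates used in (12) to (14) can be checked numerically. The Python sketch below assumes NumPy and takes $U$ from an eigendecomposition of the Laplacian of Section V, a random $x$, and random symmetric stand-ins `Q` and `R` for $PA+A^{\rm T}P$ and $PBB^{\rm T}P$. These stand-ins are illustrative assumptions: identity (12) holds for any symmetric $Q$ and $R$ once $\omega$ lies in the range of $\mathcal{L}\otimes I_n$.

```python
import numpy as np

rng = np.random.default_rng(1)
N, n, mu = 4, 3, 1.5
lap = np.array([[1, -1, 0, 0],
                [-1, 3, -1, -1],
                [0, -1, 2, -1],
                [0, -1, -1, 2]], dtype=float)
lam, U = np.linalg.eigh(lap)            # U orthogonal, U^T L U = diag(lam)

x = rng.standard_normal(N * n)
omega = np.kron(lap, np.eye(n)) @ x      # omega = (L kron I_n) x
xi = np.kron(U.T, np.eye(n)) @ omega     # xi = (U^T kron I_n) omega
xi_blocks = xi.reshape(N, n)             # row i is xi_i

S1, S2 = rng.standard_normal((2, n, n))
Q, R = S1 + S1.T, S2 @ S2.T              # symmetric stand-ins for PA + A^T P, P B B^T P

lhs = omega @ (np.kron(np.eye(N), Q) - 2 * mu * np.kron(lap, R)) @ omega
rhs = sum(xi_blocks[i] @ (Q - 2 * mu * lam[i] * R) @ xi_blocks[i]
          for i in range(1, N))          # the sum over i = 2, ..., N in (12)

print(np.allclose(xi_blocks[0], 0.0))                          # True: xi_1 = 0
print(np.isclose(lhs, rhs))                                    # True: identity (12)
print(np.isclose(np.linalg.norm(xi), np.linalg.norm(omega)))   # True: ||xi|| = ||omega||
```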

Combining (11) and (14), noting that $a_{ij}=a_{ji}$, and applying Young's inequality $2\|u\|\|v\|\leq\theta\|u\|^{2}+\frac{1}{\theta}\|v\|^{2}$, one has

\begin{align}
\dot{V}(t)\leq& -\delta\sum\limits_{i=1}^{N}\|\omega_{i}(t)\|^{2}\;+\notag\\
&\ 2\mu\sum\limits_{i=1}^{N}\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}\omega_{i}^{\rm T}(t)PBB^{\rm T}P(e_j(t)-e_{i}(t))\leq\notag\\
& -\delta\sum\limits_{i=1}^{N}\|\omega_{i}(t)\|^{2}+\mu\|PBB^{\rm T}P\|\sum\limits_{i=1}^{N}\Delta_{i}(\theta\|\omega_{i}(t)\|^{2}\;+\notag\\
&\ \dfrac{1}{\theta}\|e_{i}(t)\|^{2})+\mu\|PBB^{\rm T}P\|\sum\limits_{i=1}^{N}\Delta_{i}\theta\|\omega_{i}(t)\|^{2}\;+\notag\\
&\ \mu\|PBB^{\rm T}P\|\sum\limits_{i=1}^{N}\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}\dfrac{1}{\theta}\|e_{j}(t)\|^{2}=\notag\\
&\ \sum\limits_{i=1}^{N}\Big\{\big(-\delta +2\mu\|PBB^{\rm T}P\|\Delta_{i}\theta \big)\|\omega_{i}(t)\|^{2}\;+\notag\\
&\ 2\mu\|PBB^{\rm T}P\|\dfrac{\Delta_{i}}{\theta}\|e_{i}(t)\|^{2}\Big\},
\end{align}

where $\theta$ is the positive scalar defined in Theorem 1. For each $i$, define the triggering condition as

\begin{equation}\label{16}
\begin{array}{cll}
\|e_{i}(t)\|\leq\dfrac{d_{i}}{1+d_{i}}\|\omega_{i}(t_{k}^{i})\|,
\end{array}
\end{equation}

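where $d_i$ is defined in Theorem 1. By (4), $\|\omega_{i}(t_{k}^{i})\|\leq\|\omega_{i}(t)\|+\|e_{i}(t)\|$, so (16) gives $\|e_{i}(t)\|\leq\frac{d_{i}}{1+d_{i}}(\|\omega_{i}(t)\|+\|e_{i}(t)\|)$, that is, $\|e_{i}(t)\|\leq d_{i}\|\omega_{i}(t)\|$. Substituting this bound and the definition of $d_i$ into (15) yields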

\begin{eqnarray*}
\begin{array}{cll}
\dot{V}(t)\leq\sum\limits_{i=1}^{N}(\sigma_{i}-1)(\delta-2\mu\theta\Delta_{i}\|PBB^{\rm T}P\|)\|\omega_{i}(t)\|^{2},
\end{array}
\end{eqnarray*}

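so that $\dot{V}(t)<0$ whenever $\omega(t)\neq 0$, for any $0<\sigma_{i}<1$ and $0<\theta<\frac{\delta}{2\mu\max\nolimits_{i}\{\Delta_{i}\}\|PBB^{\rm T}P\|}$. Hence $\omega(t)\rightarrow 0$ as $t\rightarrow\infty$. Since $\mathcal {G}$ is connected, the null space of $\mathcal {L}\otimes I_n$ consists of the vectors $\textbf{1}\otimes v$, $v\in{\bf R}^{n}$, so $\omega(t)=(\mathcal {L}\otimes I_n)x(t)\rightarrow 0$ implies (2). It remains to choose the triggering instants such that (16) holds. It follows from (4) and (7) that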

\begin{align}\label{17}
\|\dot{e}_{i}(t)\|=&\ \|\dot{\omega}_{i}(t)\|\leq\notag\\
&\ \|A\|\|e_{i}(t)\|+\|A\omega_{i}(t_{k}^{i})\|+\notag\\
&\ \|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\omega_{i}(t_{k}^{i})-\omega_{j}(t_{k'}^{j}))\|.
\end{align}

By the comparison principle, the evolution of $\|e_{i}(t)\|$ for $t\in[t_{k}^{i},t_{k+1}^{i})$ is bounded by the solution of

\begin{align}\label{18}
\|\dot{p}_{i}(t)\|=&\ \|A\|\|p_{i}(t)\|+\|A\omega_{i}(t_{k}^{i})\|+\notag\\
&\ \|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\omega_{i}(t_{k}^{i})-\omega_{j}(t_{k'}^{j}))\|
\end{align}

with $p_{i}(t_{k}^{i})=0$. The corresponding solution of (18) is

\begin{align}\label{19}
&\|p_{i}(t)\|=\notag\\
&\qquad\dfrac{\|A\omega_{i}(t_{k}^{i})\|+\|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\omega_{i}(t_{k}^{i})-\omega_{j}(t_{k'}^{j}))\|}{\|A\|}\times\notag\\
&\qquad({\rm e}^{\|A\|(t-t_{k}^{i})}-1).
\end{align}

From (16) and (19), the time it takes the bound $\|p_{i}(t)\|$ to grow from $0$ to $\frac{d_{i}}{1+d_{i}}\|\omega_{i}(t_{k}^{i})\|$, which is a lower bound on the time it takes $\|e_{i}(t)\|$ to do so, satisfies

\begin{align}\label{20}
&\dfrac{\|A\omega_{i}(t_{k}^{i})\|+\|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\omega_{i}(t_{k}^{i})-\omega_{j}(t_{k'}^{j}))\|}{\|A\|}\times\notag\\
&\qquad({\rm e}^{\|A\|(t-t_{k}^{i})}-1)=\dfrac{d_{i}}{1+d_{i}}\|\omega_{i}(t_{k}^{i})\|.
\end{align}

Thus the triggering time can be chosen as

\begin{align*}
&t_{k+1}^{i}\leq t_{k}^{i}+\dfrac{1}{\|A\|}\ln\Big(1+\\
&\qquad\dfrac{d_{i}}{1+d_{i}}\dfrac{\|A\|\|\omega_{i}(t_{k}^{i})\|}{\|A\omega_{i}(t_{k}^{i})\|+\|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\omega_{i}(t_{k}^{i})-\omega_{j}(t_{k'}^{j}))\|}\Big),
\end{align*}
which is (9). This completes the proof.
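Rule (9) only needs quantities that agent $i$ knows at $t_{k}^{i}$. The Python sketch below uses only the standard library; its function and argument names are hypothetical. It evaluates the largest admissible inter-event time from (9) together with the comparison bound (19), where `K` stands for the numerator of (19). The branch for $\|A\|=0$ uses the limit $p_i(t)=K(t-t_k^i)$ of (19), an extra case not treated in the paper.

```python
import math


def error_bound(norm_A, K, tau):
    """Comparison bound (19): p_i = K/||A|| * (e^{||A|| tau} - 1)."""
    if norm_A == 0.0:
        return K * tau                  # limit of (19) as ||A|| -> 0
    return K / norm_A * math.expm1(norm_A * tau)


def inter_event_bound(norm_A, norm_A_omega_k, norm_coupling, norm_omega_k, d_i):
    """Largest t_{k+1}^i - t_k^i allowed by (9).

    norm_A         : ||A||
    norm_A_omega_k : ||A omega_i(t_k^i)||
    norm_coupling  : ||mu B F sum_j a_ij (omega_i(t_k^i) - omega_j(t_k'^j))||
    norm_omega_k   : ||omega_i(t_k^i)||
    d_i            : gain d_i from Theorem 1
    """
    K = norm_A_omega_k + norm_coupling
    threshold = d_i / (1.0 + d_i) * norm_omega_k   # right-hand side of (16)
    if K == 0.0:
        return math.inf                 # the bound p_i never leaves zero
    if norm_A == 0.0:
        return threshold / K
    return math.log1p(norm_A * threshold / K) / norm_A


tau = inter_event_bound(norm_A=2.0, norm_A_omega_k=1.0, norm_coupling=0.5,
                        norm_omega_k=1.0, d_i=0.25)
print(tau)
print(error_bound(2.0, 1.5, tau))  # approximately 0.2 = d_i/(1+d_i)*||omega_i(t_k^i)||
```

If $\omega_i(t_k^i)=0$ the threshold, and hence the returned interval, is zero; the sketch does not treat that case specially.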

IV. DISTRIBUTED SELF-TRIGGERED OUTPUT FEEDBACK CONTROL

If some states of the agents cannot be measured, control strategies based on state feedback cannot be used, and control schemes based on output feedback are needed instead. A state observer can be designed as

\begin{equation}\label{21}
\left\{
\begin{array}{lll}
\dot{\hat{x}}_{i}(t)=A\hat{x}_{i}(t)+Bu_{i}(t)+L(y_{i}(t)-\hat{y}_{i}(t)),\\
\hat{y}_{i}(t)=C\hat{x}_i(t),~~ i=1,2,\cdots,N,
\end{array}
\right.
\end{equation}

where $\hat{x}_{i}(t)\in{\bf R}^{n}$ is the observer state, $\hat{y}_{i}(t)\in {\bf R}^{q}$ is the observer output, and $L\in {\bf R}^{n\times q}$ is a constant gain matrix to be designed. Define

\begin{align}\label{22}
&\tilde{x}_{i}(t)=x_{i}(t)-\hat{x}_{i}(t),\notag\\
&\hat{\omega}_{i}(t)=\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\hat{x}_{i}(t)-\hat{x}_{j}(t)),\notag\\
&\tilde{\omega}_{i}(t)=\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\tilde{x}_{i}(t)-\tilde{x}_{j}(t)).
\end{align}

The distributed self-triggered observer-based output feedback control law is designed as

\begin{align}\label{23}
u_{i}(t)=-\mu F\hat{\omega}_{i}(t_{k}^{i}),~~t\in[t_{k}^{i},t_{k+1}^{i}),
\end{align}

where $\hat{\omega}_{i}(t_{k}^{i})=\sum\nolimits_{j\in\mathcal {N}_{i}}a_{ij}(\hat{x}_{i}(t_{k}^{i})-\hat{x}_{j}(t_{k}^{i}))$. The state measurement error is defined as

\begin{align}\label{24}
e_{i}(t)=\hat{\omega}_{i}(t_{k}^{i})-\hat{\omega}_{i}(t),~~t\in[t_{k}^{i},t_{k+1}^{i}).
\end{align}

Multiplying both sides of the closed-loop system formed by (1), (21) and (23) by $\mathcal {L}\otimes I_{n}$, one has

\begin{equation}\label{25}
\left\{
\begin{array}{lll}
\dot{\omega}(t)=\bar{A}\omega(t)-(\mu \mathcal {L}\otimes BF)\hat{\omega}(t)-(\mu \mathcal {L}\otimes BF)e(t),\\
\dot{\hat{\omega}}(t)=(\bar{A}-\mu \mathcal {L}\otimes BF)\hat{\omega}(t)-(\mu \mathcal {L}\otimes BF)e(t)+\\
\qquad\quad (I_{N}\otimes LC)\tilde{\omega}(t),\\
\dot{\tilde{\omega}}(t)=(I_{N}\otimes(A- LC))\tilde{\omega}(t),
\end{array}
\right.
\end{equation}

where $\tilde{\omega}(t)=[\tilde{\omega}_{1}^{\rm T}(t),\cdots,\tilde{\omega}_{N}^{\rm T}(t)]^{\rm T}$ and $\hat{\omega}(t)=[\hat{\omega}_{1}^{\rm T}(t),\cdots,\hat{\omega}_{N}^{\rm T}(t)]^{\rm T}$. It can be observed from the third equation in (25) that $\tilde{\omega}(t)$ converges to zero exponentially if $L$ is designed such that $A-LC$ is Hurwitz. Thus, since the term $(I_{N}\otimes LC)\tilde{\omega}(t)$ in the second equation of (25) vanishes asymptotically, the stability analysis of that equation reduces to that of the following system

\begin{equation}\label{26}
\begin{array}{cll}
\dot{\hat{\omega}}(t)=(\bar{A}-\mu\mathcal {L}\otimes BF)\hat{\omega}(t)-(\mu\mathcal {L}\otimes BF)e(t).
\end{array}
\end{equation}

Then we get the following result.

${\bf Theorem 2.}$ Assume that the communication graph $\mathcal {G}$ is connected. Let $L$ be any gain matrix such that $A-LC$ is Hurwitz. If the triggering instants are chosen such that

\begin{equation}\label{27}
\begin{array}{cll}
t_{k+1}^{i}\leq t_{k}^{i}+\tau,
\end{array}
\end{equation}

where $\tau$ satisfies the equation

\begin{equation}\label{28}
\begin{array}{cll}
b\tau+\dfrac{c}{a}=\delta {\rm e}^{-a\tau},
\end{array}
\end{equation}

with $a=\|A\|$, $b=\frac{\|LC\|}{\|A\|}\|\mu BF\sum\nolimits_{j\in\mathcal {N}_{i}}a_{ij}(\hat{\omega}_{i}(t_{k}^{i})-\hat{\omega}_{j}(t_{k'}^{j}))\|$, $c=\big(\|A\|+\|LC\|(1+\frac{d_{i}}{1+d_{i}})\big)\|\hat{\omega}_{i}(t_{k}^{i})\|+\big(1-\frac{\|LC\|}{\|A\|}\big)\|\mu BF\sum\nolimits_{j\in\mathcal {N}_{i}}a_{ij}(\hat{\omega}_{i}(t_{k}^{i})-\hat{\omega}_{j}(t_{k'}^{j}))\|$, $\delta=\frac{c}{a}+\frac{d_{i}}{1+d_{i}}\|\hat{\omega}_{i}(t_{k}^{i})\|$, $\mu$ sufficiently large such that $\mu\lambda_{2}\geq 1$, $F=B^{\rm T}P$ with $P>0$ being a solution of the Riccati equation (8), and $d_i$ defined as in Theorem 1, then the $N$ agents in (1) reach consensus under the control law (23).
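Theorem 2 only asks that $A-LC$ be Hurwitz. For the agent model and observer gain used in Section V this can be checked numerically; the Python sketch below assumes NumPy, and `is_hurwitz` is a hypothetical helper. By hand, the characteristic polynomial of $A-LC$ for these matrices is $s^4+12.25s^3+90.6s^2+238.875s+438.75$, and the first column of its Routh array has no sign changes.

```python
import numpy as np


def is_hurwitz(M, margin=0.0):
    """True if every eigenvalue of M has real part < -margin."""
    return bool(np.all(np.linalg.eigvals(M).real < -margin))


# Agent and observer matrices from Section V.
A = np.array([[0.0, 1.0, 0.0, 0.0],
              [-48.6, -1.25, 48.6, 0.0],
              [0.0, 0.0, 0.0, 10.0],
              [1.95, 0.0, -1.95, 0.0]])
C = np.array([[1.0, 0.0, 0.0, 0.0],
              [0.0, 1.0, 0.0, 0.0]])
L_obs = np.array([[10.0, 0.0, 0.0, 0.0],
                  [0.0, 1.0, 0.0, 0.0]]).T      # observer gain L (4 x 2)

A_obs = A - L_obs @ C                            # estimation-error dynamics in (25)
print(np.poly(A_obs))          # characteristic polynomial coefficients
print(np.linalg.eigvals(A_obs))
print(is_hurwitz(A_obs))       # True
```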

${\bf Proof.}$ Consider a Lyapunov function candidate for the closed-loop system as

\begin{eqnarray*}
\begin{array}{cll}
V(t)=\hat{\omega}^{\rm T}(t)\bar{P}\hat{\omega}(t),
\end{array}
\end{eqnarray*}

where $\bar{P}$ is defined in (10). Calculating the time derivative of $V(t)$ along the solutions of (26), one has

\begin{align}\label{29}
&\dot{V}(t)=\notag\\
&\qquad \hat{\omega}^{\rm T}(t)\big(I_{N}\otimes(PA+A^{\rm T}P)-2\mu\mathcal {L}\otimes PBB^{\rm T}P \big)\hat{\omega}(t)+\notag\\
&\qquad 2\mu\sum\limits_{i=1}^{N}\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}\hat{\omega}_{i}^{\rm T}(t)PBB^{\rm T}P(e_j(t)-e_{i}(t)).
\end{align}

Following the same steps as from (12) to (15), one has

\begin{align}\label{30}
\dot{V}(t)\leq&\ \sum\limits_{i=1}^{N}\Big\{\big(-\delta +2\mu\|PBB^{\rm T}P\|\Delta_{i}\theta \big)\|\hat{\omega}_{i}(t)\|^{2}+\notag\\
&\ 2\mu\|PBB^{\rm T}P\|\frac{\Delta_{i}}{\theta}\|e_{i}(t)\|^{2}\Big\}.
\end{align}

Thus for agent $i$ the triggering condition can be designed as

\begin{equation}\label{31}
\begin{array}{cll}
\|e_{i}(t)\|\leq\dfrac{d_{i}}{1+d_{i}}\|\hat{\omega}_{i}(t_{k}^{i})\|,
\end{array}
\end{equation}

where $d_i$ is defined in Theorem 1. As in the proof of Theorem 1, it follows from (24) and (31) that

\begin{equation}\label{32}
\begin{array}{cll}
\|e_{i}(t)\|\leq d_{i}\|\hat{\omega}_{i}(t)\|.
\end{array}
\end{equation}

Thus
$$
\dot{V}(t)\leq\sum\limits_{i=1}^{N}(\sigma_{i}-1)(\delta-2\mu\theta\Delta_{i}\|PBB^{\rm T}P\|)\|\hat{\omega}_{i}(t)\|^{2},
$$

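so that $\dot{V}(t)<0$ whenever $\hat{\omega}(t)\neq 0$, for any $0<\sigma_{i}<1$ and $0<\theta<\frac{\delta}{2\mu\max\nolimits_{i}\{\Delta_{i}\}\|PBB^{\rm T}P\|}$. Hence $\hat{\omega}(t)\rightarrow 0$; together with $\tilde{\omega}(t)\rightarrow 0$ and $\omega(t)=\hat{\omega}(t)+\tilde{\omega}(t)$, this gives $\omega(t)\rightarrow 0$ and thus consensus, as in the proof of Theorem 1. It remains to choose the triggering instants such that (31) holds.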

By (24) and (31),
$$
\|\hat{\omega}_{i}(t_{k}^{i})-\hat{\omega}_{i}(t)\|\leq\dfrac{d_{i}}{1+d_{i}}\|\hat{\omega}_{i}(t_{k}^{i})\|,
$$
which implies
$$
\|\hat{\omega}_{i}(t)\|\leq\left(1+\dfrac{d_{i}}{1+d_{i}}\right)\|\hat{\omega}_{i}(t_{k}^{i})\|.
$$

Since $\tilde{\omega}_{i}(t)=\omega_{i}(t)-\hat{\omega}_{i}(t)$, one further has
\begin{equation}\label{33}
\begin{array}{cll}
\|\tilde{\omega}_{i}(t)\|-\|\omega_{i}(t)\|\leq\|\hat{\omega}_{i}(t)\|\leq\left(1+\dfrac{d_{i}}{1+d_{i}}\right)\|\hat{\omega}_{i}(t_{k}^{i})\|,
\end{array}
\end{equation}

thus
\begin{equation}\label{34}
\begin{array}{cll}
\|\tilde{\omega}_{i}(t)\|\leq\|\omega_{i}(t)\|+\left(1+\dfrac{d_{i}}{1+d_{i}}\right)\|\hat{\omega}_{i}(t_{k}^{i})\|.
\end{array}
\end{equation}

From (24) and the first equation in (25), one has
\begin{equation}\label{35}
\begin{array}{cll}
\dot{\omega}_{i}(t)=A\omega_{i}(t)-\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\hat{\omega}_{i}(t_{k}^{i})-\hat{\omega}_{j}(t_{k'}^{j})),
\end{array}
\end{equation}

then

\begin{align}\label{36}
\|\omega_{i}(t)\|\leq&\ \dfrac{1}{\|A\|}\|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\hat{\omega}_{i}(t_{k}^{i})-\hat{\omega}_{j}(t_{k'}^{j}))\|\times\notag\\
&\ \left({\rm e}^{\|A\|(t-t_{k}^{i})}-1\right).
\end{align}

From (24) and the second equation in (25), one has

\begin{align}\label{37}
\|\dot{e}_{i}(t)\|=&\ \|\dot{\hat{\omega}}_{i}(t)\|\leq\notag\\
&\ \|A\|\|e_{i}(t)\|+\|A\|\|\hat{\omega}_{i}(t_{k}^{i})\|+\notag\\
&\ \|\mu BF\sum\limits_{j\in\mathcal {N}_{i}}a_{ij}(\hat{\omega}_{i}(t_{k}^{i})-\hat{\omega}_{j}(t_{k'}^{j}))\|\;+\notag\\
&\ \|LC\|\|\tilde{\omega}_{i}(t)\|.
\end{align}

Substituting (34) and (36) into (37), one has

\begin{equation}\label{38}
\begin{array}{cll}
\|\dot{e}_{i}(t)\|\leq a\|e_{i}(t)\|+b{\rm e}^{a(t-t_{k}^{i})}+c,~~t\in[t_{k}^{i},t_{k+1}^{i}),
\end{array}
\end{equation}

where $a$, $b$ and $c$ are defined in Theorem 2. By the comparison principle, the evolution of $\|e_{i}(t)\|$ for $t\in[t_{k}^{i},t_{k+1}^{i})$ is bounded by the solution of

\begin{equation}\label{39}
\begin{array}{cll}
\|\dot{p}_{i}(t)\|=a\|p_{i}(t)\|+b{\rm e}^{a(t-t_{k}^{i})}+c.
\end{array}
\end{equation}

With $p_{i}(t_{k}^{i})=0$, the corresponding solution of (39) is

\begin{equation}\label{40}
\begin{array}{cll}
\|p_{i}(t)\|={\rm e}^{a(t-t_{k}^{i})}\left(\dfrac{c}{a}+b(t-t_{k}^{i})\right)-\dfrac{c}{a}.
\end{array}
\end{equation}

From (31) and (40), the time it takes the bound $\|p_{i}(t)\|$ to grow from $0$ to $\frac{d_{i}}{1+d_{i}}\|\hat{\omega}_{i}(t_{k}^{i})\|$, which is a lower bound on the time it takes $\|e_{i}(t)\|$ to do so, satisfies

\begin{equation}\label{41}
\begin{array}{cll}
{\rm e}^{a(t-t_{k}^{i})}\left(\dfrac{c}{a}+b(t-t_{k}^{i})\right)-\dfrac{c}{a}=\dfrac{d_{i}}{1+d_{i}}\|\hat{\omega}_{i}(t_{k}^{i})\|,
\end{array}
\end{equation}

which can be rewritten as

\begin{equation}\label{42}
\begin{array}{cll}
\dfrac{c}{a}+b\tau=\delta {\rm e}^{-a\tau},
\end{array}
\end{equation}

where $\tau=t-t_{k}^{i}$ and $\delta$ is defined in Theorem 2. Since $\delta>\frac{c}{a}$, the left-hand side of (42) is smaller than the right-hand side at $\tau=0$; moreover, as $\tau\rightarrow\infty$, $\delta{\rm e}^{-a\tau}\rightarrow 0$ while $b\tau\rightarrow+\infty$. By continuity, there exists a positive scalar $\tau$ that solves (42). Thus the triggering time can be chosen as $t_{k+1}^{i}\leq t_{k}^{i}+\tau$, which is (27). This completes the proof.
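Equation (28), equivalently (42), has no closed-form solution in general, but the difference between its two sides is strictly increasing in $\tau$ when $a>0$, $b\geq 0$ and $\delta>0$, so bisection finds the unique positive root reliably. The Python sketch below uses only the standard library; its names are hypothetical. It computes $a$, $b$, $c$ and $\delta$ of Theorem 2 from the norms agent $i$ knows at $t_k^i$ and then solves for $\tau$; `delta_k` denotes the $\delta$ defined in Theorem 2, not the Riccati parameter in (8).

```python
import math


def theorem2_coefficients(norm_A, norm_LC, norm_coupling, norm_omega_hat_k, d_i):
    """a, b, c and delta of Theorem 2 for agent i at t_k^i.

    norm_coupling    : ||mu B F sum_j a_ij (omega_hat_i(t_k^i) - omega_hat_j(t_k'^j))||
    norm_omega_hat_k : ||omega_hat_i(t_k^i)||
    """
    r = d_i / (1.0 + d_i)
    a = norm_A
    b = norm_LC / norm_A * norm_coupling
    c = ((norm_A + norm_LC * (1.0 + r)) * norm_omega_hat_k
         + (1.0 - norm_LC / norm_A) * norm_coupling)
    delta_k = c / a + r * norm_omega_hat_k
    return a, b, c, delta_k


def solve_tau(a, b, c, delta_k, rel_tol=1e-12):
    """Positive root of g(tau) = c/a + b*tau - delta_k*exp(-a*tau), i.e. (28)."""
    def g(tau):
        return c / a + b * tau - delta_k * math.exp(-a * tau)

    if g(0.0) >= 0.0:
        raise ValueError("need delta_k > c/a (i.e. omega_hat_i(t_k^i) != 0)")
    lo, hi = 0.0, 1.0
    while g(hi) < 0.0:                  # bracket the root
        hi *= 2.0
        if hi > 1e9:
            raise ValueError("no root found; b may be zero")
    while hi - lo > rel_tol * hi:       # bisection keeps g(lo) < 0 <= g(hi)
        mid = 0.5 * (lo + hi)
        if g(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


a, b, c, delta_k = theorem2_coefficients(norm_A=2.0, norm_LC=1.0, norm_coupling=0.5,
                                         norm_omega_hat_k=1.0, d_i=0.25)
tau = solve_tau(a, b, c, delta_k)
# Bound (40) at tau; by (41) it should equal d_i/(1+d_i)*||omega_hat_i(t_k^i)||.
p_tau = math.exp(a * tau) * (c / a + b * tau) - c / a
print(tau, p_tau)
```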

V. A SIMULATION EXAMPLE

In this section, an example is provided to validate the effectiveness of the proposed control approaches. Consider a network of four agents described by

\begin{align*}
\left\{
\begin{array}{cll}
\dot{x}_{i}(t)=&\!\!\!\!\!\left[
\begin{array}{cccc}
0 & 1 & 0 & 0 \\
-48.6 & -1.25 & 48.6 & 0 \\
0 & 0 & 0 & 10 \\
1.95 & 0 & -1.95 & 0 \\
\end{array}
\right]
x_{i}(t)+\\[7mm]
&\!\!\!\!\!\left[
\begin{array}{c}
0 \\
21.6 \\
0 \\
0 \\
\end{array}
\right]
u_{i}(t),\\[7mm]
y_{i}(t)=&\!\!\!\!\!\left[
\begin{array}{cccc}
1 & 0 & 0 & 0 \\
0 & 1 & 0 & 0 \\
\end{array}
\right]x_{i}(t),~~ i=1,2,3,4.
\end{array}
\right.
\end{align*}
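The standing assumptions of Section II, controllability of $(A,B)$ and observability of $(A,C)$, can be verified for this example with the Kalman rank tests. The Python sketch below assumes NumPy; the helper names are hypothetical.

```python
import numpy as np


def controllability_matrix(A, B):
    """[B, AB, ..., A^{n-1} B]."""
    blocks = [B]
    for _ in range(A.shape[0] - 1):
        blocks.append(A @ blocks[-1])
    return np.hstack(blocks)


def observability_matrix(A, C):
    """[C; CA; ...; C A^{n-1}], obtained by duality."""
    return controllability_matrix(A.T, C.T).T


A = np.array([[0.0, 1.0, 0.0, 0.0],
              [-48.6, -1.25, 48.6, 0.0],
              [0.0, 0.0, 0.0, 10.0],
              [1.95, 0.0, -1.95, 0.0]])
B = np.array([[0.0], [21.6], [0.0], [0.0]])
C = np.array([[1.0, 0.0, 0.0, 0.0],
              [0.0, 1.0, 0.0, 0.0]])

print(np.linalg.matrix_rank(controllability_matrix(A, B)))  # 4: (A, B) controllable
print(np.linalg.matrix_rank(observability_matrix(A, C)))    # 4: (A, C) observable
```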

The Laplacian matrix of the network is given by
$$\mathcal {L}=\left[
\begin{array}{rrrr}
1 & -1 & 0 & 0 \\
-1 & 3 & -1 & -1 \\
0 & -1 & 2 & -1 \\
0 & -1 & -1 & 2 \\
\end{array}
\right].$$

For state feedback control, choose $\sigma_{1}=0.1$, $\sigma_{2}=0.2$, $\sigma_{3}=0.3$, $\sigma_{4}=0.4$, $\delta=0.01$ and $\theta=0.002$. By solving the Riccati equation (8), one has $F=[0.2663~~0.2214~~0.0499~~1.2022]$.

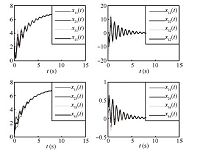

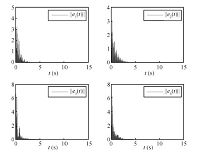

Using controller (23) and triggering condition (27),the states and

states measurement errors of multi-agent systems are shown in

Figs.1 and 2,respectively. Choose $L=\left[

\begin{array}{cccc}

10 & 0 & 0 & 0 \\

0 & 1 & 0 & 0 \\

\end{array}

\right]^{\rm T}$,$\sigma_{1}=0.1$,$\sigma_{2}$ $=$ $0.2$,$\sigma_{3}$ $=$ $0.3$,$\sigma_{4}=0.4$,$\alpha=0.01$,$\theta=0.002$,

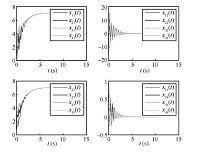

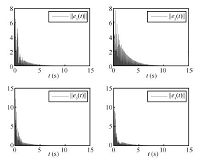

for output feedback control. Using controller (23) and triggering

condition (27),the states and states measurement errors of

multi-agent systems are shown in Figs.3 and 4,respectively.

VI. CONCLUSION

This paper has addressed the consensus problem of identical agents with event-based control and communication. To reduce the number of control updates and the communication effort, distributed self-triggered cooperative control strategies based on state feedback and on output feedback have been proposed, and it has been shown that consensus can be reached in both cases for any connected communication graph. Future work includes extending the proposed approach to MASs with heterogeneous dynamics and to agents with uncertainties.

2014, Vol.1
